Friday, January 16th 2015

GeForce GTX 960 3DMark Numbers Emerge

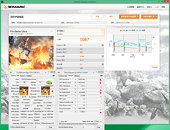

Ahead of its January 22nd launch, members of the Chinese PC community PCEVA leaked performance figures for NVIDIA's GeForce GTX 960. The card was installed on a test bed driven by a Core i7-4770K overclocked to 4.50 GHz. The card itself appears to be factory-overclocked, if these specs are to be believed. It scored P9960 and X3321 in the Performance and Extreme presets of 3DMark 11, respectively. In the standard 3DMark Fire Strike test, the card scored 6636 points; with some manual overclocking thrown in, it managed 7509 points in the same test. Fire Strike Extreme (1440p) was harsh on the card, which scored 3438 points, and Fire Strike Ultra was too much for it to chew, yielding only 1087 points. Judging by these numbers, the GTX 960 could be an interesting offering for Full HD (1920 x 1080) gaming, but not a pixel more.

Source:

PCEVA Forums

98 Comments on GeForce GTX 960 3DMark Numbers Emerge

Based on TPU's performance summary charts, half of a 980 would land right at 760-level performance, and twice a 750 would land right at 770 level... but the 960's bandwidth is only 40% higher than the 750's. It's possible that the 750 has more bandwidth than it needs, but I know that overclocking its RAM results in a significant FPS improvement. I don't understand how video cards work that well, but unless Nvidia has done additional optimizations on GM206, it seems like it would be close to a 760... which would be lame for $200. I also believe it will consume a lot less than 120 W at base clocks, more like 90 W, based on the 980 and 750.
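The "only 40% higher" bandwidth figure can be checked with simple arithmetic. This is a minimal sketch, assuming both cards use a 128-bit memory bus (not stated in the thread) and the 5 Gbps and 7 Gbps GDDR5 data rates mentioned further down:

```python
# Peak memory bandwidth sketch.
# Assumption (not from the thread): GTX 750 and GTX 960 both use a 128-bit memory bus.
# Data rates of 5 Gbps (GTX 750) and 7 Gbps (GTX 960) are the ones quoted later in the thread.

def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = (bus width in bytes) x (per-pin data rate in Gbps)."""
    return bus_width_bits / 8 * data_rate_gbps

gtx750 = peak_bandwidth_gbs(128, 5.0)  # 16 bytes x 5 Gbps
gtx960 = peak_bandwidth_gbs(128, 7.0)  # 16 bytes x 7 Gbps
uplift = gtx960 / gtx750 - 1           # fractional increase over the GTX 750

print(f"GTX 750: {gtx750:.1f} GB/s, GTX 960: {gtx960:.1f} GB/s, uplift: {uplift:.0%}")
```

With equal bus widths, the uplift is simply the ratio of the data rates (7 / 5 = 1.4), which is where the 40% comes from.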

I think the 2GB of RAM will make it a poor SLI choice, but if you can get a 4GB model, a pair in SLI should land between the 970 and 980.

You cannot warm up a room with a 300 W or even a 600 W heater. :laugh: :D

Meanwhile, the site owner's personal experience with 290X CrossFire:

If you are so inclined, I will invite you to my home. We will pick a random room, cool it to an ambient temperature of 17-18 °C, and I will give you permission to use my rig with two R9 290X cards.

If you succeed in warming the room, I will admit I was wrong.

Until then, I will laugh at you and not even take into consideration those stupid comparisons in a hot climate.

In a hot climate, even smoking a cigarette feels unpleasant. :rolleyes:

In a closed system, the addition of heat energy will elevate the temperature of the space it occupies; this is (very) basic physics.

You're assuming that the offer doesn't include a personal air-conditioning fan?

Two 290Xs won't be enough. :D

Ooo, and please, do not mention my mom!!! Because, as far as I remember, I haven't mentioned yours! :rolleyes:

It will vary hugely depending on how thermally isolated the room is, its thermal capacitance, its size, how long you heat it, its starting temperature, and whether you perceive a temperature rise as an issue (if it's winter and you keep your house at 65°F, then no problem; if it's summer and it's already 90°F, then yes). If the room were perfectly insulated, even 1 W would eventually send the temperature to infinity. But most rooms are not well insulated from each other, doors are not sealed (or are even open), and air is circulated by heating and air-conditioning systems.
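The point about a perfectly insulated room can be made concrete with the simplest possible heat-balance model. This is a sketch, not anything the poster wrote: it treats the room as a single lumped heat capacity C that leaks heat to the outside through a single conductance G, and the values of C and G used below are hypothetical.

```python
import math

def temperature_rise(power_w, capacity_j_per_k, conductance_w_per_k, seconds):
    """Rise above ambient (K) after `seconds` of constant heating, starting at ambient.

    Lumped single-node model: C * dT/dt = P - G * dT, where
    C = heat capacity of the room (J/K) and G = conductance to the outside (W/K).
    """
    if conductance_w_per_k == 0:
        # Perfect insulation: every joule stays in the room, so the rise grows linearly forever.
        return power_w * seconds / capacity_j_per_k
    steady_state = power_w / conductance_w_per_k   # leaky room: rise levels off at P / G
    tau = capacity_j_per_k / conductance_w_per_k   # time constant in seconds
    return steady_state * (1 - math.exp(-seconds / tau))

# Hypothetical room: C = 200 kJ/K, heated by a 1 W source for one year.
YEAR_S = 365 * 24 * 3600
for g in (0.0, 0.5):  # perfectly insulated vs. slightly leaky (hypothetical G values)
    print(f"G = {g} W/K: rise after one year = {temperature_rise(1.0, 200_000.0, g, YEAR_S):.1f} K")
```

Any nonzero leak caps the rise at P / G, which is why real rooms with unsealed doors and HVAC circulation never run away the way the idealized case does.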

My wife has a little electric resistance heater. It's 1500 W, and it can heat a small sealed room by more than 10°F in an hour. 500 W for 3 hours would be similar. My dad's 3,000 sq ft, 50-year-old house uses electric resistance heat. In winter, his average consumption is 5000 W... that's to hold the whole house 40+°F over ambient, at steady state. If he had 10 x 500 W systems cranking 24/7, he could get rid of the furnace.
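The heater figures above reduce to two pieces of arithmetic: equal energy (power x time) and a steady-state heat balance. A minimal sketch using only the numbers in the post, assuming the house behaves like one lumped conductance G and taking "40+°F" as exactly 40°F:

```python
# Arithmetic behind the heater comparison (numbers from the post; lumped-model assumption).
F_PER_K = 9 / 5  # a temperature *difference* of 1 K equals 1.8 °F

# Same energy either way: 1500 W for 1 h vs. 500 W for 3 h, in kilowatt-hours.
heater_kwh = 1500 * 1 / 1000
pc_kwh = 500 * 3 / 1000

# At steady state, heat lost to the outside equals heat put in, so G = P / dT.
house_power_w = 5000
house_rise_k = 40 / F_PER_K              # 40 °F over ambient, expressed in kelvin
house_g = house_power_w / house_rise_k   # W/K, treating the whole house as one conductance

# Under that assumption, a single 500 W PC alone would hold the house this far above ambient:
pc_rise_f = 500 / house_g * F_PER_K
print(heater_kwh, pc_kwh, round(house_g), round(pc_rise_f, 1))
```

Under these assumptions, one 500 W system running around the clock keeps that house only about 4°F above ambient on its own, which is why it takes roughly ten of them to stand in for the 5000 W furnace load.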

So yes, the heat given off by a computer can be significant. It is also usually not that hard to make it a non-issue.

Getting back to the 960, I don't think it will be near 120 W unless it is overclocked a lot. It's half of a 980 and a little less than double a 750. It should be under 100 W.

I'd want to understand the construction and the outside ambient temperature they factored in. While a fan could, under the right conditions, raise the temperature, isn't 9°F in an hour (about 13%) hard to fathom? Especially from something that does little more than move air, like a table fan drawing about 10-25 W? Most modern ceiling fans draw less than an amp, averaging between 0.5 and 1 A, with 10-50 W being customary. Beyond the air circulating within a completely sealed room, there are other factors to account for, such as a human body; otherwise, why leave the fan on in the first place?

I'm not sure how they arrived at a 13% increase over a normal [72°F] room in just one hour, unless the room is affected by additional conduction from the outside ambient, or body heat adds thermal load. By sheer calculation, you might say the volume of air within a sealed "room" with a 40 W load (a light bulb, ~36 joules/sec) would produce a rise, but you'd be lucky to see 4-5°F in an hour. And since the fan moves the air, it also affects how much heat is dissipated into or absorbed by the wall surfaces, which are in turn affected by the outside ambient.

Ok, might as well just calculate it for a perfectly sealed and insulated room. Specific heat of air: ~1.00 kJ/(kg·K). Density: ~1.2 kg/m³. A 12 x 12 x 8 ft room has 1152 ft³, or about 33 m³. Total mass of air is 33 x 1.2 ≈ 40 kg. 40 W for 1 hr is 40 x 60 x 60 = 144 kJ. Temperature rise = 144 / (40 x 1.00) = 3.6 K, or 6.5°F.
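The same calculation as a short script, under the post's own idealized assumptions (all heat goes into the air, none into walls or furniture). The only added constant is the exact cubic-foot-to-cubic-metre factor, so the unrounded result comes out slightly higher than the hand calculation, and still well short of 9°F:

```python
# Idealized sealed, perfectly insulated room: all heat goes into the air.
FT3_TO_M3 = 0.0283168   # cubic feet to cubic metres
CP_AIR = 1.00           # specific heat of air, kJ/(kg*K), as used in the post
RHO_AIR = 1.2           # density of air, kg/m^3, as used in the post

volume_m3 = 12 * 12 * 8 * FT3_TO_M3   # 1152 ft^3 of air
mass_kg = volume_m3 * RHO_AIR         # mass of that air
energy_kj = 40 * 3600 / 1000          # 40 W for one hour, in kJ
rise_k = energy_kj / (mass_kg * CP_AIR)
rise_f = rise_k * 9 / 5               # temperature difference in °F

print(f"{volume_m3:.1f} m^3, {mass_kg:.1f} kg, {rise_k:.1f} K, {rise_f:.1f} F")
```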

I don't get a 9 F rise even with bogus idealized assumptions.

Having AC or airflow in the room might alleviate or entirely negate the effects, but I'm pretty certain that parking the system under the desk amplifies them. Airflow and AC also depend on being available, which might not be a given for a lot of users.

The user experience is of course particular to the person, but I'm pretty sure anecdotal evidence points to a significant percentage of users being affected. My 780 SLI system draws close to what a 290X CrossFire setup does (~700-750 W), and I'm pretty sure I notice the difference between idle and 3D load. BTW, the local ambient temperature here is 18°C at 9:10 a.m. with winds under 2 km/h, which is about average for late evening, overnight, and early morning during summer and autumn.

That might be a little conservative. The GTX 970/980's TDP seems to be based on the base clock, which in practice is rarely, if ever, the frequency the reference core actually runs at. Two other factors to take into consideration are that the 960 is clocked at least 100 MHz higher (core) and uses 4GB of 7 Gbps GDDR5 (@1.55 V) rather than the 2GB of 5 Gbps (@1.35 V) found in the GTX 750/750 Ti.

I have no doubt that Nvidia will quote the base-clock TDP for the card for marketing purposes, but I wouldn't be overly surprised to see the card use 20 W or so more under real-world 3D load.
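To get a feel for how much the faster, higher-voltage memory could matter, a common first-order rule for CMOS dynamic power is P ∝ C·V²·f. Applying it to the GDDR5 figures above is an assumption: it holds capacitance fixed and ignores the 4GB vs 2GB capacity difference, static/leakage power, and memory-controller power, so it yields only a rough ratio, not watts.

```python
# First-order CMOS dynamic-power scaling: P is proportional to C * V^2 * f.
# Assumption: memory dynamic power scales with data rate (f) and voltage squared,
# with capacitance held fixed. Capacity, leakage and controller power are ignored.

def dynamic_power_ratio(f_new, v_new, f_old, v_old):
    """Ratio of new to old dynamic power at equal switched capacitance."""
    return (f_new / f_old) * (v_new / v_old) ** 2

# GTX 960 (7 Gbps @ 1.55 V) vs. GTX 750/750 Ti (5 Gbps @ 1.35 V), figures from the post.
ratio = dynamic_power_ratio(7.0, 1.55, 5.0, 1.35)
print(f"~{ratio:.2f}x the memory dynamic power")
```

Without a baseline figure for the 750's memory power, this cannot be turned into an absolute wattage, but it does support the idea that the memory subsystem alone could add a noticeable share of the extra draw.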