Intel Builds World's Largest Neuromorphic System to Enable More Sustainable AI

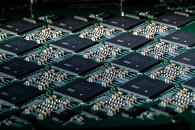

Today, Intel announced that it has built the world's largest neuromorphic system. Code-named Hala Point, the system is initially deployed at Sandia National Laboratories and uses Intel's Loihi 2 processor. It is intended to support research into future brain-inspired artificial intelligence (AI) and to tackle challenges related to the efficiency and sustainability of today's AI. Hala Point builds on Intel's first-generation large-scale research system, Pohoiki Springs, with architectural improvements that deliver over 10 times more neuron capacity and up to 12 times higher performance.

"The computing cost of today's AI models is rising at unsustainable rates. The industry needs fundamentally new approaches capable of scaling. For that reason, we developed Hala Point, which combines deep learning efficiency with novel brain-inspired learning and optimization capabilities. We hope that research with Hala Point will advance the efficiency and adaptability of large-scale AI technology." -Mike Davies, director of the Neuromorphic Computing Lab at Intel Labs