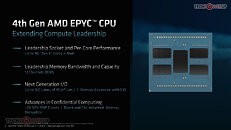

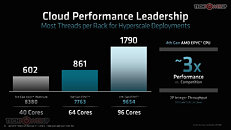

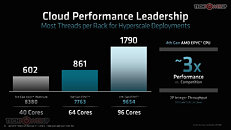

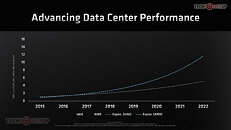

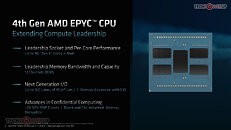

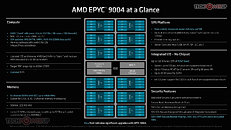

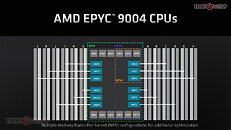

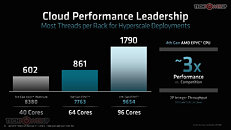

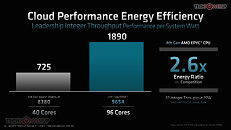

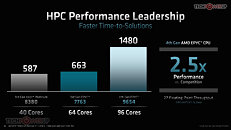

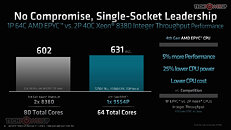

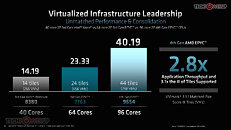

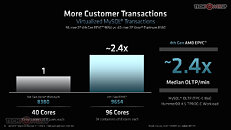

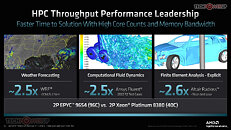

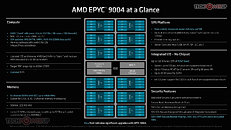

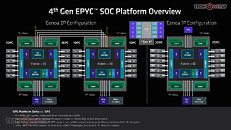

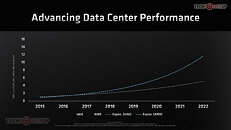

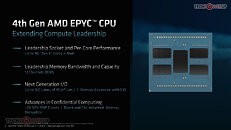

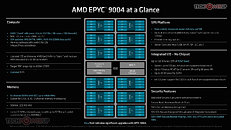

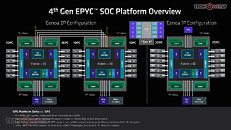

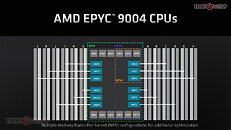

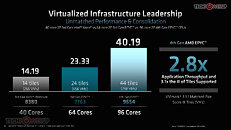

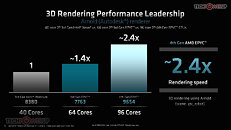

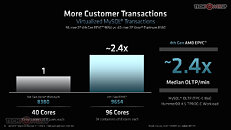

AMD, at a special media event titled "together we advance_data centers," formally launched its 4th generation EPYC "Genoa" server processors based on the "Zen 4" microarchitecture. These processors debut an all-new platform, with modern I/O connectivity that includes PCI-Express Gen 5, CXL, and DDR5 memory. The processors come in CPU core-counts of up to 96-core/192-thread. There are as many as 18 processor SKUs, differentiated not just by CPU core-count, but also by the way the cores are spread across the up to 12 "Zen 4" chiplets (CCDs). Each chiplet features up to 8 "Zen 4" CPU cores, depending on the model, and up to 32 MB of L3 cache, and is built on the 5 nm EUV process at TSMC. The CCDs talk to a centralized server I/O die (sIOD), which is built on the 6 nm process.

The processors AMD is launching today are the EPYC "Genoa" series, targeting general purpose servers, although they can be deployed in large cloud data-centers, too. For large-scale cloud providers such as AWS, Azure, and Google Cloud, AMD is readying a different class of processor, codenamed "Bergamo," which it plans to launch later. In 2023, the company will launch the "Genoa-X" line of processors for technical-compute and HPC applications, which benefit from large on-die caches, as they feature the 3D Vertical Cache technology. There will also be "Siena," a class of EPYC processors targeting the telecom and edge-computing markets, which could see an integration of more Xilinx IP.

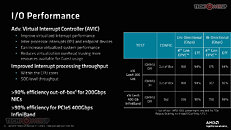

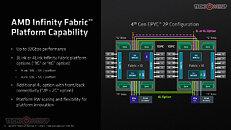

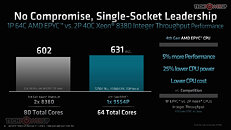

The EPYC "Genoa" processor, as we mentioned, comes in core-counts of up to 96-core/192-thread, dominating the 40-core/80-thread counts of the 3rd Gen Xeon Scalable "Ice Lake-SP," and also staying ahead of the 60-core/120-thread counts of the upcoming Xeon Scalable "Sapphire Rapids." The new AMD processor also sees a significant buff to its I/O capabilities, featuring a 12-channel (24 sub-channel) DDR5 memory interface, and a gargantuan 160-lane PCI-Express Gen 5 interface (that's ten Gen 5 x16 slots running at full bandwidth). The platform also supports CXL and 2P xGMI links, which are carved out of those multipurpose lanes.

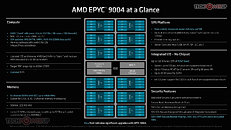

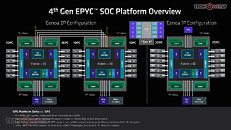

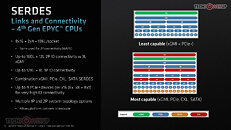

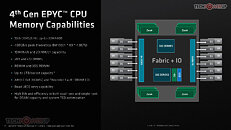

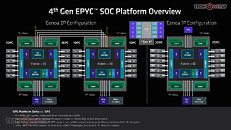

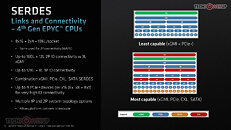

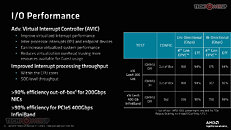

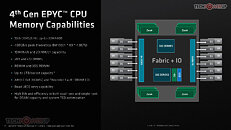

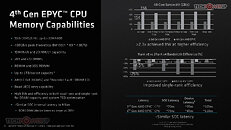

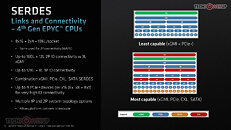

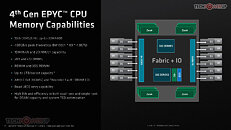

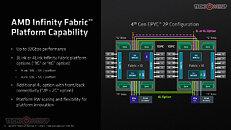

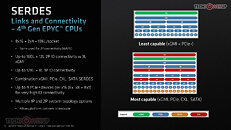

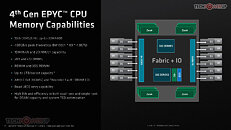

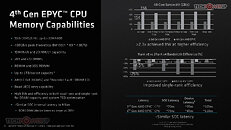

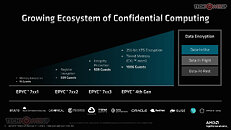

The new 6 nm server I/O die (sIOD) has a significantly higher transistor count than the 12 nm one powering past-gen EPYC processors. The high transistor count is due to two large 80-lane configurable SERDES (serializer-deserializer) components, which can be made to put out PCIe Gen 5 lanes, CXL 1.1 lanes, SATA 6 Gbps ports, or even the inter-socket Infinity Fabric enabling 2P platforms. The processor supports up to 64 CXL 1.1 lanes that can be used to connect to networked memory-pooling devices. 3rd generation Infinity Fabric connects the various components inside the sIOD, links the sIOD to the twelve "Zen 4" CCDs via IFOP, and serves as the inter-socket interconnect. The processor features a 12-channel (24 x 40-bit sub-channels) memory interface, which supports up to 6 TB of ECC DDR5-4800 memory per socket. The latest generation Secure Processor provides SEV-SNP (secure nested paging) and AES-256-XTS, for a larger number of secure VMs.
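To put the I/O figures above in perspective, here is a rough back-of-the-envelope sketch in Python. It takes the lane, channel, and speed numbers from the article; the 32-data-bit split of each 40-bit sub-channel is an assumption based on the usual DDR5 ECC layout, and the result is a theoretical peak, not a measured figure.

```python
# Back-of-the-envelope I/O figures derived from the numbers quoted in the article.

SERDES_BLOCKS = 2             # two configurable SERDES components (article)
LANES_PER_SERDES = 80         # 80 lanes each (article)
LANES_PER_X16_SLOT = 16

DDR5_CHANNELS = 12            # memory channels per socket (article)
SUBCHANNELS_PER_CHANNEL = 2   # 24 sub-channels / 12 channels (article)
SUBCHANNEL_DATA_BITS = 32     # ASSUMPTION: 40-bit sub-channel = 32 data + 8 ECC bits
TRANSFER_RATE_MT_S = 4800     # DDR5-4800 (article)


def total_lanes() -> int:
    """Total multipurpose lanes exposed by the sIOD."""
    return SERDES_BLOCKS * LANES_PER_SERDES


def x16_slots(lanes: int) -> int:
    """Number of full-width x16 slots the given lane count could feed."""
    return lanes // LANES_PER_X16_SLOT


def peak_memory_bandwidth_gb_s() -> float:
    """Theoretical peak DDR5 bandwidth per socket in GB/s (data bits only)."""
    bytes_per_transfer = (
        DDR5_CHANNELS * SUBCHANNELS_PER_CHANNEL * SUBCHANNEL_DATA_BITS // 8
    )
    return bytes_per_transfer * TRANSFER_RATE_MT_S * 1e6 / 1e9


if __name__ == "__main__":
    lanes = total_lanes()
    print(f"Multipurpose lanes: {lanes}")
    print(f"Equivalent x16 slots: {x16_slots(lanes)}")
    print(f"Peak memory bandwidth: {peak_memory_bandwidth_gb_s():.1f} GB/s")
```

Real-world throughput will land below the theoretical peak, and any lanes spent on CXL, SATA, or the inter-socket links come out of the same lane pool.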

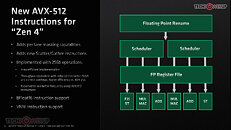

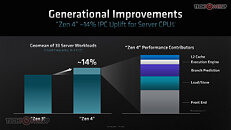

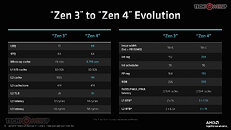

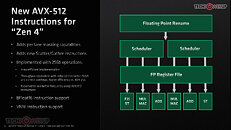

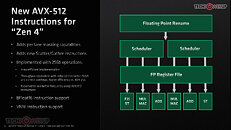

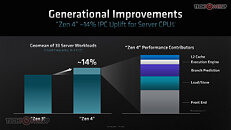

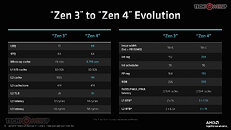

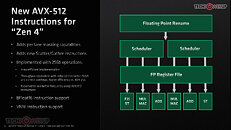

Each of the 5 nm CPU complex dies (CCDs) is physically identical to the ones you find in Ryzen 7000-series "Raphael" desktop processors. It packs 8 "Zen 4" CPU cores, each with 1 MB of dedicated L2 cache, and 32 MB of L3 cache shared among the 8 cores. Each "Zen 4" core provides a 14% generational performance uplift compared to "Zen 3," with clock-speed kept constant. Much of this uplift comes from updates to the core's front-end and load/store unit, while the branch predictor, larger L2 cache, and execution engine make smaller contributions. The biggest generational change is the ISA, which sees the introduction of support for the AVX-512 instruction-set, VNNI, and bfloat16. The new instruction sets should accelerate AVX-512 math workloads, as well as performance with AI applications. AMD says that its AVX-512 implementation is more die-efficient compared to Intel's, as it uses the existing 256-bit wide FPU in a double-pumped fashion to enable 512-bit operations.
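The per-CCD figures add up to the per-socket totals as sketched below. The counts come from the article; the second call uses a hypothetical layout with fewer active cores per CCD, purely to illustrate how spreading cores across more chiplets raises the L3 available per core. It is not a confirmed SKU configuration.

```python
# Per-socket totals built up from the per-CCD figures quoted in the article.

CCDS_PER_SOCKET = 12     # up to twelve "Zen 4" CCDs (article)
CORES_PER_CCD = 8        # up to 8 cores per CCD (article)
THREADS_PER_CORE = 2     # implied by 96-core/192-thread
L2_PER_CORE_MB = 1       # 1 MB dedicated L2 per core (article)
L3_PER_CCD_MB = 32       # 32 MB shared L3 per CCD (article)


def socket_totals(ccds: int = CCDS_PER_SOCKET,
                  cores_per_ccd: int = CORES_PER_CCD) -> dict:
    """Return core, thread, and cache totals for one socket."""
    cores = ccds * cores_per_ccd
    l3_mb = ccds * L3_PER_CCD_MB
    return {
        "cores": cores,
        "threads": cores * THREADS_PER_CORE,
        "l2_mb": cores * L2_PER_CORE_MB,
        "l3_mb": l3_mb,
        "l3_mb_per_core": l3_mb / cores,
    }


if __name__ == "__main__":
    # Fully populated part: 12 CCDs with all 8 cores enabled.
    print(socket_totals())
    # HYPOTHETICAL layout: 8 CCDs with only 2 active cores each.
    print(socket_totals(ccds=8, cores_per_ccd=2))
```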

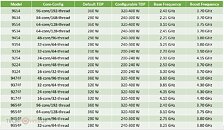

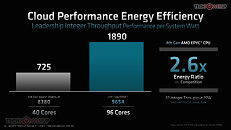

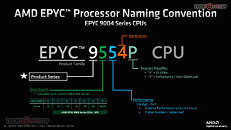

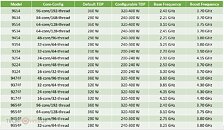

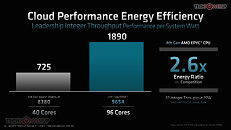

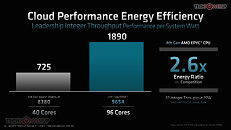

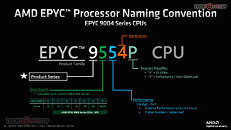

AMD is launching a total of 18 processor SKUs today, all meant for the Socket SP5 platform. They follow the nomenclature described in the slide below. EPYC is the top-level brand, and "9" is the product series. The next digit indicates core-count, with "0" denoting 8 cores, "1" denoting 16, "2" denoting 24, "3" denoting 32, "4" denoting 48, "5" being 64, and "6" being 84-96. The next digit denotes performance on a 1-10 scale. The model number can end in a trailing character, which could either be "P" or "F," with "P" denoting single-socket (1P-only) SKUs, and "F" denoting special SKUs that focus on fewer cores per CCD to improve per-core performance. The configurable TDP of all SKUs is rated up to 400 W, which seems high, but one should take into account the CPU core-count, and the impact it has on the number of server blades per rack. This is one of the reasons AMD isn't scaling beyond 2 sockets per server. According to AMD, the company's core-density translates into 67% fewer servers and 52% less power.
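The naming scheme can be expressed as a small decoder. This is a minimal sketch, not an official AMD tool: the function name `decode_epyc_model` is made up for illustration, the core-count table is copied from the paragraph above, and the fourth digit (which the article does not describe) is assumed here to be a generation digit. The trailing suffix is returned as-is without interpretation.

```python
import re

# Core-count digit mapping, as listed in the article.
CORE_COUNT_BY_DIGIT = {
    "0": "8",
    "1": "16",
    "2": "24",
    "3": "32",
    "4": "48",
    "5": "64",
    "6": "84-96",
}

# Four digits starting with the "9" series digit, plus an optional P/F suffix.
MODEL_RE = re.compile(r"^(9)(\d)(\d)(\d)([PF]?)$")


def decode_epyc_model(model: str) -> dict:
    """Split an EPYC 9000-series model number into its naming fields."""
    match = MODEL_RE.match(model.strip().upper())
    if match is None:
        raise ValueError(f"not a recognised EPYC 9000-series model: {model!r}")
    series, cores_digit, perf_digit, fourth_digit, suffix = match.groups()
    if cores_digit not in CORE_COUNT_BY_DIGIT:
        raise ValueError(f"unknown core-count digit: {cores_digit!r}")
    return {
        "series": series,
        "cores": CORE_COUNT_BY_DIGIT[cores_digit],
        "performance": int(perf_digit),
        # ASSUMPTION: the article does not explain this digit; it is
        # treated as a generation digit here.
        "generation": int(fourth_digit),
        "suffix": suffix,  # "P", "F", or "" when absent
    }


if __name__ == "__main__":
    for name in ("9174F", "9374F", "9474F", "9654"):
        print(name, decode_epyc_model(name))
```

The three "F" models used in AMD's performance comparison decode to 16, 32, and 48 cores respectively, which matches the core-counts quoted for them.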

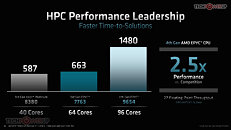

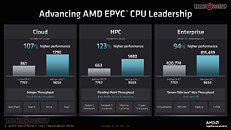

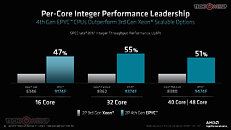

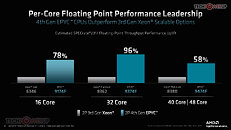

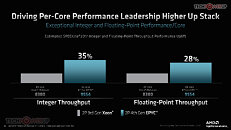

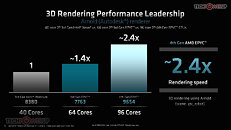

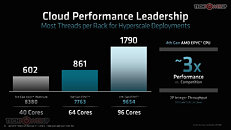

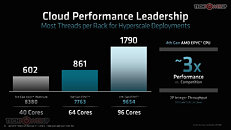

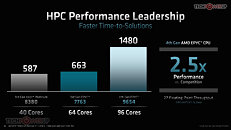

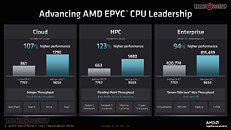

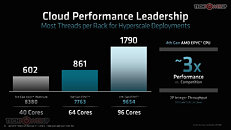

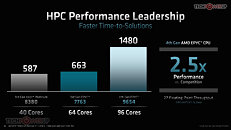

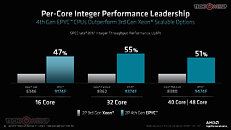

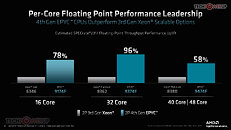

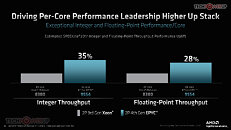

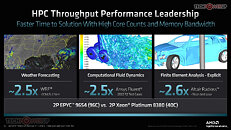

In terms of performance, AMD only has Intel's dated 3rd Gen Xeon Scalable "Ice Lake-SP" processors for comparison, since "Sapphire Rapids" is still unreleased. With core-counts equalized as far as the product stacks allow, the 16-core EPYC 9174F is shown to be 47% faster than the Xeon Gold 6346; the 32-core EPYC 9374F is 55% faster than the Xeon Platinum 8362; and the 48-core EPYC 9474F is 51% faster than the 40-core Xeon Platinum 8380. The same test group also sees 58-96% floating-point performance leadership in favor of AMD.
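Since the last pairing is not core-for-core, the lead can be normalized by core-count for a rough per-core view. The sketch below assumes the quoted percentages are throughput ratios and that performance scales linearly with cores, which is a simplification. The 16-core and 32-core Xeon counts are implied by the "core-counts equalized" framing rather than stated outright.

```python
# Rough per-core normalisation of the speedups quoted in the article.
# ASSUMPTIONS: the percentages are throughput ratios, and throughput scales
# linearly with core-count. Neither is confirmed by the source.

COMPARISONS = [
    # (AMD part, AMD cores, Intel part, Intel cores, quoted speedup)
    ("EPYC 9174F", 16, "Xeon Gold 6346", 16, 1.47),
    ("EPYC 9374F", 32, "Xeon Platinum 8362", 32, 1.55),
    ("EPYC 9474F", 48, "Xeon Platinum 8380", 40, 1.51),
]


def per_core_speedup(speedup: float, amd_cores: int, intel_cores: int) -> float:
    """Divide the overall speedup by the core-count advantage."""
    return speedup / (amd_cores / intel_cores)


if __name__ == "__main__":
    for amd, amd_cores, intel, intel_cores, speedup in COMPARISONS:
        ratio = per_core_speedup(speedup, amd_cores, intel_cores)
        print(f"{amd} vs {intel}: {ratio - 1:.0%} faster per core")
```

Under these assumptions the 48-core versus 40-core result shrinks to a lead of roughly 26% per core, while the two equal-core pairings are unchanged.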

The complete slide-deck follows.

