My issue is more with boards like this

[Link: GIGABYTE Ultra Durable™ motherboard product page, www.gigabyte.com]

And this

[Link: GIGABYTE Ultra Durable™ motherboard product page, www.gigabyte.com]

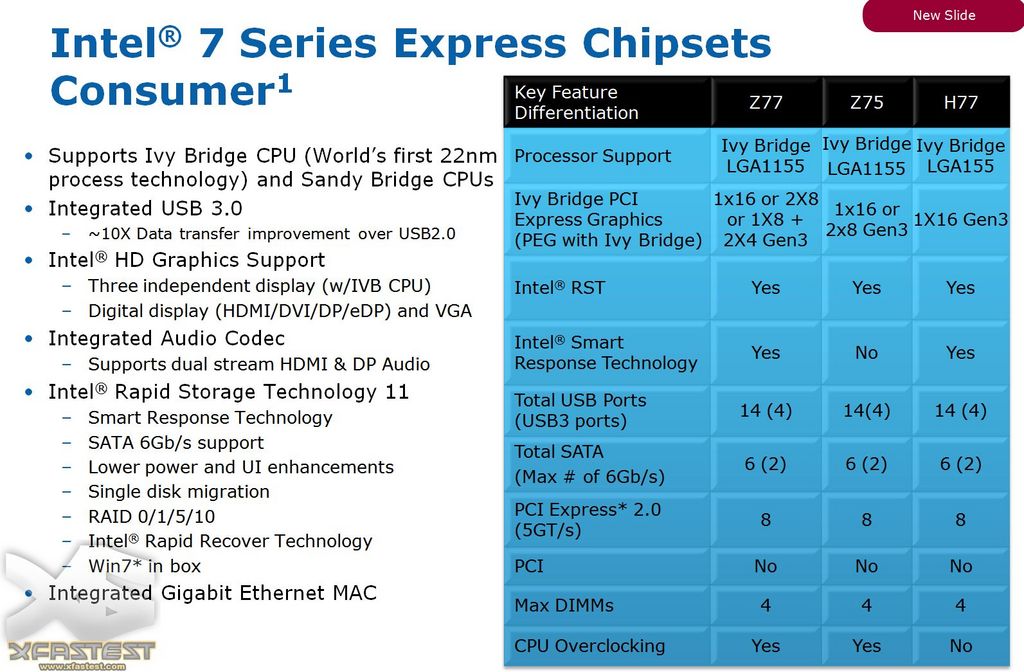

Once manufacturers try to build high-end tiers on mid-range chipsets, it just doesn't add up.

Both of those boards end up "limiting" the GPU slot to x8 (as you already pointed out), since they need to borrow the other eight lanes from it; otherwise you can't use half of the features on the boards.

Consumers who aren't aware of this and end up pairing one of these boards with an APU are in for a rude awakening, as the CPU doesn't have enough PCIe lanes to enable some of the board's features. It's even worse in these cases, since Gigabyte didn't provide a block diagram, so it's not really clear which interfaces are shared.

This might not be the chipset vendor's fault as such, but the chipset's 10 PCIe lanes aren't enough in these examples. They would be fine on most mATX or mini-ITX boards, though.
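To make the lane-sharing arithmetic a bit more concrete, here's a rough Python sketch. Everything in it is hypothetical: the device list and per-device lane counts are made up for illustration (they are not taken from either Gigabyte board, since there's no block diagram), the chipset is assumed to offer 10 general-purpose lanes, and extra lanes are assumed to come from splitting the CPU's graphics lanes in half (x16 to x8/x8). The "APU" case simply assumes a chip that exposes only x8 for graphics.

```python
from typing import Dict, Optional, Tuple

# Hypothetical lane-budget check for a "high-end" board on a mid-range chipset.
# All numbers are illustrative assumptions, not from any vendor block diagram.
CHIPSET_LANES = 10        # general-purpose lanes assumed to come from the chipset
CPU_GRAPHICS_LANES = 16   # CPU lanes normally wired to the primary x16 slot


def lane_budget(devices: Dict[str, int], chipset_lanes: int = CHIPSET_LANES) -> Tuple[int, int]:
    """Return (lanes requested by onboard devices, lanes the chipset can't cover)."""
    requested = sum(devices.values())
    return requested, max(0, requested - chipset_lanes)


def gpu_slot_width(shortfall: int, graphics_lanes: int = CPU_GRAPHICS_LANES) -> Optional[int]:
    """Width left for the GPU slot after borrowing lanes to cover the shortfall.

    Models a simple half/half bifurcation (e.g. x16 -> x8/x8). Returns None when
    even the borrowed half can't cover the shortfall, i.e. some features stay off.
    """
    if shortfall == 0:
        return graphics_lanes
    half = graphics_lanes // 2
    return half if shortfall <= half else None


# Hypothetical onboard devices needing PCIe lanes
# (the CPU's dedicated x4 M.2 slot is not counted here).
board = {
    "M.2 slot #2": 4,
    "M.2 slot #3": 4,
    "PCIe x4 slot": 4,
    "2.5GbE NIC": 1,
    "Wi-Fi": 1,
    "USB 3.2 controller": 1,
}

requested, short = lane_budget(board)
print(f"requested {requested} lanes, chipset short by {short}")      # requested 15 lanes, chipset short by 5
print("CPU with x16 graphics lanes -> GPU slot:", gpu_slot_width(short))                     # 8
print("APU with x8 graphics lanes -> GPU slot:", gpu_slot_width(short, graphics_lanes=8))    # None
```

The half/half split is a deliberate simplification; real boards use specific switch/mux layouts, which is exactly why a block diagram matters.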

We're already starting to see high-end boards with four M.2 slots (didn't Asus even have one with five?), and M.2 seems to be the storage interface of the foreseeable future for desktop PCs, since U.2 never took off in the desktop space.

I think we kind of already have the x90 for AMD, but that changes the socket and moves you to HEDT...

My issue is more that the gap between the current high-end and mid-range is a little too wide, but maybe we'll see that fixed next generation. At least the B550 is an improvement over the B450.

Judging by this news post, we're looking at 24 usable PCIe lanes from the CPU, so the lanes earmarked for USB4 might be allocated to NVMe duty on cheaper boards, depending on the cost of USB4 host controllers. Obviously these are still PCIe 4.0 lanes, but I'd guess some of them will move to PCIe 5.0 at some point.

Although we've only seen the Z690 chipset from Intel so far, it seems like they're going down the route you're suggesting: the CPU has PCIe 5.0 as well as PCIe 4.0 (the latter for an SSD and for the DMI link), while the chipset provides a mix of PCIe 4.0 and PCIe 3.0.

PCIe 3.0 is still more than fast enough for the kind of Wi-Fi solutions we get, since it appears no one is really doing 3x3 cards any more. It's obviously still good enough for almost anything you can slot in, apart from 10Gbps Ethernet (assuming an x1 interface) and high-end SSDs, and there's little else in a consumer PC that can even begin to take advantage of a faster interface right now. As the regular PCIe graphics card bandwidth tests done here have shown, PCIe 4.0 only makes a difference on the very highest-end cards, and barely even there. Maybe this will change once we get MCM-type GPUs, but who knows.
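A quick back-of-the-envelope check of the 10Gbps Ethernet point, sketched in Python. It assumes raw transfer rates of 8/16/32 GT/s for PCIe 3.0/4.0/5.0 and subtracts only the 128b/130b line encoding; packet and protocol overhead is ignored, so real-world throughput will be somewhat lower.

```python
# Approximate usable per-lane PCIe throughput (one direction) after line encoding.
# PCIe 3.0/4.0/5.0 run at 8/16/32 GT/s with 128b/130b encoding.
# Protocol overhead (packet headers, flow control) is ignored here.
GT_PER_S = {"3.0": 8, "4.0": 16, "5.0": 32}
ENCODING = 128 / 130

TEN_GBE = 10.0  # 10 Gbit/s Ethernet line rate


def lane_gbps(gen: str) -> float:
    """Usable line rate of one lane in Gbit/s, after 128b/130b encoding."""
    return GT_PER_S[gen] * ENCODING


def link_gbps(gen: str, width: int) -> float:
    """Usable line rate of an xN link in Gbit/s."""
    return lane_gbps(gen) * width


for gen in GT_PER_S:
    x1 = link_gbps(gen, 1)
    verdict = "enough" if x1 >= TEN_GBE else "not enough"
    print(f"PCIe {gen} x1: {x1:.2f} Gbit/s ({x1 / 8 * 1000:.0f} MB/s) -> {verdict} for 10GbE")

# PCIe 3.0 x1: 7.88 Gbit/s (985 MB/s) -> not enough for 10GbE
# PCIe 4.0 x1: 15.75 Gbit/s (1969 MB/s) -> enough for 10GbE
# PCIe 5.0 x1: 31.51 Gbit/s (3938 MB/s) -> enough for 10GbE
```

So a 10GbE controller on PCIe 3.0 needs at least an x2 link, whereas a single PCIe 4.0 lane would cover it, even before any protocol overhead is taken into account.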

Anyhow, I think we more or less agree on this, and hopefully it's something AMD and Intel also figure out, instead of creating differentiators that lock out entire feature sets just to upsell buyers to a much higher tier.

Edit: Just spotted this, which suggests AMD will have AM5 CPU SKUs with 20 or 28 PCIe lanes in total, and that those three SKUs will also have an integrated GPU.

[Link: "AMD to offer integrated on-chip graphics for next-gen Ryzen series", videocardz.com]

That's exactly the point: as signal buses increase in bandwidth, they become more sensitive to interference and signal degradation. That requires much tighter trace routing, better shielding and lower signal-path resistance/impedance, and ultimately ancillary components such as redrivers and retimers become necessary for any reasonable signal length.