Cerebras Launches the World's Fastest AI Inference

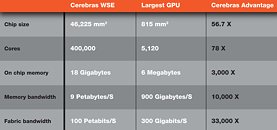

Today, Cerebras Systems, the pioneer in high performance AI compute, announced Cerebras Inference, the fastest AI inference solution in the world. Delivering 1,800 tokens per second for Llama3.1 8B and 450 tokens per second for Llama3.1 70B, Cerebras Inference is 20 times faster than NVIDIA GPU-based solutions in hyperscale clouds. Starting at just 10c per million tokens, Cerebras Inference is priced at a fraction of GPU solutions, providing 100x higher price-performance for AI workloads.

Unlike alternative approaches that compromise accuracy for performance, Cerebras offers the fastest performance while maintaining state of the art accuracy by staying in the 16-bit domain for the entire inference run. Cerebras Inference is priced at a fraction of GPU-based competitors, with pay-as-you-go pricing of 10 cents per million tokens for Llama 3.1 8B and 60 cents per million tokens for Llama 3.1 70B.

Unlike alternative approaches that compromise accuracy for performance, Cerebras offers the fastest performance while maintaining state of the art accuracy by staying in the 16-bit domain for the entire inference run. Cerebras Inference is priced at a fraction of GPU-based competitors, with pay-as-you-go pricing of 10 cents per million tokens for Llama 3.1 8B and 60 cents per million tokens for Llama 3.1 70B.