Apr 4th, 2025 21:15 EDT

change timezone

Latest GPU Drivers

New Forum Posts

- [Intel AX1xx/AX2xx/AX4xx/AX16xx/BE2xx/BE17xx] Intel Modded Wi-Fi Driver with Intel® Killer™ Features (301)

- New posts added to last post (4)

- Comet Lake vs Rocket Lake Lga1200. (8)

- I have an idea for cooling 1kW GPU power. (20)

- What's your latest tech purchase? (23476)

- Will I need an PSU upgrade (33)

- The coffee and tea drinkers club. (232)

- I tried to use AMD Auto Overclock, and now my PC has been freezing up sometimes. Afterwards, the screen goes black or displays artifacts. (3)

- Recommended PhysX card for 5xxx series? [Is vRAM relevant?] (229)

- TPU's Nostalgic Hardware Club (20168)

Popular Reviews

- DDR5 CUDIMM Explained & Benched - The New Memory Standard

- PowerColor Radeon RX 9070 Hellhound Review

- Corsair RM750x Shift 750 W Review

- ASUS Prime X870-P Wi-Fi Review

- Sapphire Radeon RX 9070 XT Pulse Review

- Pwnage Trinity CF Review

- Upcoming Hardware Launches 2025 (Updated Apr 2025)

- Sapphire Radeon RX 9070 XT Nitro+ Review - Beating NVIDIA

- AMD Ryzen 7 9800X3D Review - The Best Gaming Processor

- Palit GeForce RTX 5070 GamingPro OC Review

Controversial News Posts

- MSI Doesn't Plan Radeon RX 9000 Series GPUs, Skips AMD RDNA 4 Generation Entirely (146)

- Microsoft Introduces Copilot for Gaming (124)

- AMD Radeon RX 9070 XT Reportedly Outperforms RTX 5080 Through Undervolting (119)

- NVIDIA Reportedly Prepares GeForce RTX 5060 and RTX 5060 Ti Unveil Tomorrow (115)

- Over 200,000 Sold Radeon RX 9070 and RX 9070 XT GPUs? AMD Says No Number was Given (100)

- NVIDIA GeForce RTX 5050, RTX 5060, and RTX 5060 Ti Specifications Leak (96)

- Nintendo Switch 2 Launches June 5 at $449.99 with New Hardware and Games (90)

- Retailers Anticipate Increased Radeon RX 9070 Series Prices, After Initial Shipments of "MSRP" Models (90)

News Posts matching #Instinct MI100

U.S. Government Restricts Export of AI Compute GPUs to China and Russia (Affects NVIDIA, AMD, and Others)

The U.S. Government has imposed restrictions on the export of AI compute GPUs to China and Russia without government authorization in the form of a waiver or a license. This impacts sales of products such as the NVIDIA A100 and H100; the AMD Instinct MI100 and MI200; and the upcoming Intel "Ponte Vecchio," among others. The restrictions came to light on Wednesday, when NVIDIA disclosed that it had received a Government notification about licensing requirements for the export of its AI compute GPUs to Russia and China.

The notification doesn't specify the A100 and H100 by name, but defines AI inference performance thresholds above which the licensing requirements apply. The Government wouldn't single out NVIDIA, and so competing products such as the AMD MI200 and the upcoming Intel Xe-HPC "Ponte Vecchio" would fall within these restrictions. For NVIDIA, this impacts $400 million in TAM, unless the Government licenses specific Russian and Chinese customers to purchase these GPUs from NVIDIA. Such trade restrictions usually come with riders to prevent resale or transshipment by companies outside the restricted region (e.g., a distributor in a third, waived country importing these chips in bulk and reselling them to these countries).

AMD Announces CDNA Architecture. Radeon MI100 is the World's Fastest HPC Accelerator

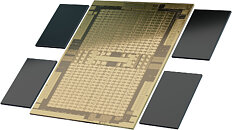

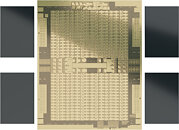

AMD today announced the new AMD Instinct MI100 accelerator - the world's fastest HPC GPU and the first x86 server GPU to surpass the 10 teraflops (FP64) performance barrier. Supported by new accelerated compute platforms from Dell, Gigabyte, HPE, and Supermicro, the MI100, combined with AMD EPYC CPUs and the ROCm 4.0 open software platform, is designed to propel new discoveries ahead of the exascale era.

Built on the new AMD CDNA architecture, the AMD Instinct MI100 GPU enables a new class of accelerated systems for HPC and AI when paired with 2nd Gen AMD EPYC processors. The MI100 offers up to 11.5 TFLOPS of peak FP64 performance for HPC and up to 46.1 TFLOPS peak FP32 Matrix performance for AI and machine learning workloads. With new AMD Matrix Core technology, the MI100 also delivers a nearly 7x boost in FP16 theoretical peak floating point performance for AI training workloads compared to AMD's prior generation accelerators.

AMD Eyes Mid-November CDNA Debut with Instinct MI100, "World's Fastest FP64 Accelerator"

AMD is eyeing a mid-November debut for its CDNA compute architecture with the Instinct MI100 compute accelerator card. CDNA is a fork of RDNA for headless GPU compute accelerators with large SIMD resources. An Aroged report pins the launch of the MI100 at November 16, 2020, according to leaked AMD documents it dug up. The Instinct MI100 will eye a slice of the same machine intelligence pie NVIDIA is seeking to dominate with its A100 Tensor Core compute accelerator.

It appears that the first MI100 cards will be built in the add-in-board form factor with PCI-Express 4.0 x16 interfaces, although older reports predict that AMD will create a socketed variant of its Infinity Fabric interconnect for machines with larger numbers of these compute processors. In the leaked document, AMD claims that the Instinct MI100 is the "world's highest double-precision accelerator for machine learning, HPC, cloud compute, and rendering systems." This is an especially big claim given that the A100 Tensor Core features FP64 CUDA cores based on the "Ampere" architecture. Then again, given AMD's claims that the RDNA2 graphics architecture is clawing back performance against NVIDIA at the high end, the competitiveness of the Instinct MI100 against the A100 Tensor Core cannot be discounted.

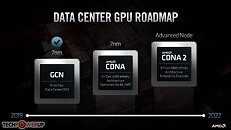

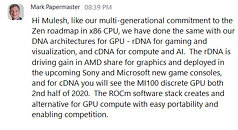

AMD Confirms CDNA-Based Radeon Instinct MI100 Coming to HPC Workloads in 2H2020

Mark Papermaster, chief technology officer and executive vice president of Technology and Engineering at AMD, today confirmed that CDNA is on track for release in 2H2020 for HPC. The confirmation was (fittingly) given during Dell's EMC High-Performance Computing Online event. This confirms that AMD is looking at a busy second half of the year, with the Zen 3, RDNA 2, and CDNA product lines all being pushed to market.

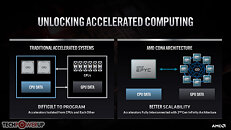

CDNA is AMD's next push into the highly lucrative HPC market, and will see the company differentiate its GPU architectures by target market. CDNA will see raster graphics hardware, display and multimedia engines, and other associated components removed from the chip design in a bid to recoup die area for both more processing units and fixed-function tensor compute hardware. The CDNA-based Radeon Instinct MI100 will be fabricated on TSMC's 7 nm node, and will be the first AMD architecture featuring shared memory pools between CPUs and GPUs via the 2nd gen Infinity Fabric, which should bring about both throughput and power consumption improvements to the platform.
