Friday, September 9th 2016

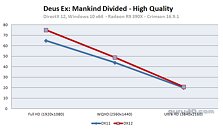

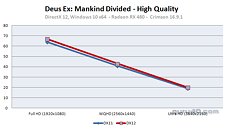

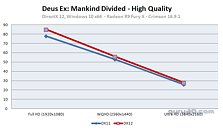

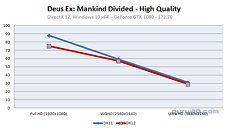

AMD GPUs See Lesser Performance Drop on "Deus Ex: Mankind Divided" DirectX 12

Deus Ex: Mankind Divided is the latest AAA title to support DirectX 12, with its developer Eidos deploying a DirectX 12 renderer through a patch weeks after release. Guru3D tested the DirectX 12 version of the game across five GPU architectures: AMD "Polaris," GCN 1.1, GCN 1.2, NVIDIA "Pascal," and NVIDIA "Maxwell," using the Radeon RX 480, Radeon R9 Fury X, Radeon R9 390X, GeForce GTX 1080, GeForce GTX 1060, and GeForce GTX 980. The AMD GPUs were driven by RSCE 16.9.1 drivers, and the NVIDIA GPUs by GeForce 372.70.

Looking at the graphs, switching from DirectX 11 to DirectX 12 mode, AMD GPUs not only don't lose frame-rates, but in some cases, even gain frame-rates. NVIDIA GPUs, on the other hand, significantly lose frame-rates. AMD GPUs tend to hold on to their frame-rates at 4K Ultra HD, marginally gain frame-rates at 2560 x 1440, and further gain frame-rates at 1080p. NVIDIA GPUs either barely hold on to their frame-rates, or significantly lose them. AMD has on multiple occasions claimed that its Graphics CoreNext architecture, combined with its purist approach to asynchronous compute, makes Radeon GPUs a better choice for DirectX 12 and Vulkan. Find more fascinating findings by Guru3D here. More graphs follow.
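For readers who want to quantify the DirectX 11 to DirectX 12 change from their own runs, the comparison comes down to a percentage difference in average frame-rate. The snippet below is a minimal sketch of that arithmetic. The function name `api_switch_delta` and the sample numbers are hypothetical placeholders for illustration; they are not Guru3D's measurements.

```python
def api_switch_delta(fps_dx11: float, fps_dx12: float) -> float:
    """Return the percentage change in frame-rate when moving from DX11 to DX12.

    Positive means the card gained frame-rate under DX12, negative means it lost.
    """
    if fps_dx11 <= 0:
        raise ValueError("fps_dx11 must be positive")
    return (fps_dx12 - fps_dx11) / fps_dx11 * 100.0


# Placeholder values only (hypothetical cards, made-up averages), NOT benchmark data.
samples = {
    "card_a": (50.0, 55.0),  # (DX11 average fps, DX12 average fps)
    "card_b": (80.0, 72.0),
}

for name, (dx11, dx12) in samples.items():
    print(f"{name}: {api_switch_delta(dx11, dx12):+.1f}%")
```

Comparing relative change rather than raw frame-rates keeps cards of different performance classes on the same scale.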

114 Comments on AMD GPUs See Lesser Performance Drop on "Deus Ex: Mankind Divided" DirectX 12

And FYI, CUDA and Stream have existed for longer, and OpenCL for about the same amount of time

Just imagine this:

We have card A. Card A gives us X fps with Y visual effects in a game.

When card A and every next generation of this card is able to draw more fps from the same game or scene, what do we (us programmers) do? WE MAKE BETTER VISUAL EFFECTS.

That's what has been going on since the 80s, and not only in graphics. Processors were able to handle more, and the software got better and better for the end user in each aspect.

If you make ZERO, that's 0, progress in your APIs, μArch, OS, etc., then you get 0 better visuals back.

What is going to happen in the future? Well, that's easy to predict: game companies will see that they cannot squeeze more complicated graphics into the games, and they will keep them the same for every generation of the games.

...and that's what nVidia has been doing for the last decade. Nvidia shits on every gamer's face with their libraries because that's what they can handle, and they do not let studios' innovations reach the market.

Do you remember what happened with the Crysis series? It was marvelous that we had a studio push the hardware that much and push the companies to make beastlier GPUs.

Do you remember what happened with every Gameworks title? Same visuals, only it forced you to jump to a newer generation of nVidia's cards because they crippled their older GPUs.

...but consumers are stupid and they deserve to get robbed by such A-holes as nVidia.

That, my "friends", is called evolution and a step forward in technology, but some people think it's not much because they have to justify their $500 or €500 purchase, not only by BSing themselves, but by spreading the S*** all over the world.

The huge memory use may be a factor, in that the monstrous textures take an awful lot of processing grunt to shift. API or not, I think running near 6 GB at 1440p shows how much is being rendered. That takes power from a hefty chip. New FX may have to wait as devs keep creating more 'on screen' visuals which consume the chip's capacity.

I don't know though, it seems that if we want photorealism, processing power with far higher TFLOPS is required. Maybe the Pascal Titan X points in that direction with its 'rubbish' async but enormous power.

I still remember the moment when Square Enix showed the DX12 demo:

The irony is that Nvidia doesn't seem to get any benefit from DX12: they have either the same or negative performance. Even the gain on AMD GPUs isn't really astonishing:

www.techspot.com/review/1081-dx11-vs-dx12-ashes/page3.html

Getting low-level optimisation on a compute monster like the Fury X is only worth 3 fps?

I remember a time when GPU makers had developers making publicly released technical demos to show what their GPUs could do. They keep blabbering about DX12, showing numbers on slides that look impressive, but right now we don't have a single example of what you can expect from DX12 on a single high-end GPU.

Vulkan is at the moment the API with the most impressive results, but if the story of OpenGL repeats itself, I fear this isn't going to matter that much, as the studios will keep giving DX12 the priority.

So yeah, DX12 is at least giving you a great bump if you've got a weak CPU, but is that really it? Was that really worth making so much noise? Right now game developers are not giving me any reason to get hyped about DX12 benefits. Will there be any project ambitious and crazy enough to make people ask: "Can it run XXX tho?" while using the benefits of a low-level API?

Deus Ex MD looks nice, but it's not "out of this world" nice. I'm not seeing a huge gap between it and Tomb Raider 2015, DOOM, or even TW3.

I totally agree with what you said, I had the same feeling when I first heard about Vulkan/DX12. If Nvidia is not careful they could lose a big chunk of the market in the next one to two gens of cards, which IMO would be something positive for the consumer, since I think that the GPU market has been lacking serious competition for some years now.

Though I hope you're right for the price drops that need to happen for all consumers, in the age of Likes, Views and Trending, mindshare is the real metric that maintains a marketshare's status quo and Nvidia is throwing too much TWIMTBP money around for that to change any time soon.

The 1080 issue was mentioned as acknowledged and under investigation. After they are done, it will probably behave like the 1060 numbers.

Whether the game is poorly optimized (or not), or uses more complex scenery than other games, doesn't matter as much as the actual card performance curve, especially if you educate yourself enough and look not only at APIs, but at the real source, which is explained in detail here

And please stop putting words like "fanboy" in your comments. It makes you look more and more like one. ;)

And currently Microsoft wants W10 adoption and DX12 is a part of that (and has been since release a year ago). And majority of gamers are on W10 in a year.

And no matter how well you optimize, there is a limit to how much the hardware can do. While those numbers, probably like with cars, should be taken with a grain of salt in that manufacturers may inflate them, if you look at the given numbers, Nvidia's are higher... Period.

techreport.com/review/30639/examining-early-directx-12-performance-in-deus-ex-mankind-divided/3

I agree Nvidia's DX11 drivers are very efficient. AMD still outperforms them in some cases; for example, 2x 480 can outperform a 1080 in DX12 in more than one game. There is more to it than drivers alone: the AMD cards are faster, although I do agree that they gain more in DX12 percentage-wise mostly due to drivers. The hardware architecture of AMD cards has been aimed towards a low-level API since the HD 7750; Nvidia still hasn't bothered with it. And given that we won't be expecting DX12 to become mainstream for games for at least another year, I think Nvidia made the right call, because they can have a new generation of GPUs out just at the time DX12 becomes mainstream. Obviously this makes the 10 series GPUs utterly pointless, but that's not an issue for Nvidia: they have sold the things now, and next gen they can convince people they really need to upgrade to fully benefit from low-level APIs.