Thursday, May 3rd 2018

AMD & Intel Roadmaps for 2018 Leaked

Bluechip Computer, a German IT distribution company, has inadvertently spilled the beans on AMD and Intel's plans for the remainder of this year, shedding some more light on a number of products whose existence was still somewhat shrouded in fog. The information comes straight from a webinar Bluechip presented to its industry partners - a 30-minute presentation which made its way to YouTube.

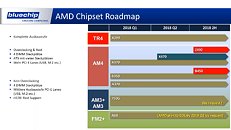

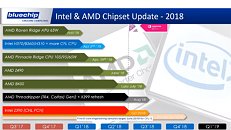

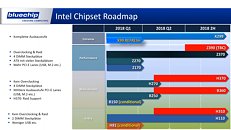

The information gleaned is a confirmation, of sorts, of AMD's planned launch of their Z490 platform in June; of the B450 chipset coming a little later, in July (an expected product, in every sense); and of AMD's second-gen Threadripper, already a known quantity, which should accompany an X399 platform refresh.

On Intel's side of the camp, some ill-kept secrets have apparently been confirmed: the launch of the company's Z390 chipset, for instance, is expected to happen in Q3 2018 - some time after Computex, which might mean a relatively sparse landscape when it comes to teases and new product announcements based on Intel's upcoming top-of-the-line chipset. Oh, and that unicorn of an 8-core Coffee Lake part is apparently being prepped for Q4 2018, with silicon being moved to partner hands as early as June.

Sources:

AnandTech, Bluechip Webinar (since removed)

53 Comments on AMD & Intel Roadmaps for 2018 Leaked

You seem to have a very selective memory. Those are just off the top of my head, I'm sure I'm missing more.

I run both Intel and AMD stuff here so I'm not a fanboy of either make, current DD rig is a 7700K based setup and I'm perfectly happy with it.

As for another comment about AMD always being, and only ever having been, a copycat, that's just wrong.

AMD has led more than once before, one such instance was what was called the Itanic disaster that had Intel trying to copy what AMD had already succeeded in doing (64 bit CPUs) yet Intel couldn't make it work themselves.

Everything they tried blew up in their faces and had them scrambling like headless chickens to try and figure out HOW 64 bit chips worked.

They finally had to make a deal with AMD to learn how it worked for making these on their own.

That's why for a while back then (about 2004-2005) AMD was pushing forward with the 64 bit CPU and Intel kept cranking out 32 bit chips and pushing the clocks higher and higher during that time.

After they finally made a deal and learned how it worked Intel used their deep pockets to run away with the performance crown - That's how it went down back in the day.

What serves my needs is what I go for and I don't care who makes it, my main concern is getting what I need for the best price possible.

Unfortunately Intel is always the higher priced stuff and for my needs it doesn't make a lot of sense to spend more than you have to.

I do hope based on the roadmap we have some good stuff from both camps coming soon, I see a lot of griping about "Nothing New" to come soon and now that we have something of a schedule shown at least we have an idea of when it should be.

BTW speaking of AMD copying, isn't Intel the one currently having problems making 7nm and isn't AMD the one already with "At least" something to show for it?

There's a thread in here somewhere on that.

en.wikipedia.org/wiki/64-bit_computing

This one is actually true.

Minus the ones IBM did, actual first multi core CPU.

www-03.ibm.com/ibm/history/ibm100/us/en/icons/power4/

Kentsfield based X32x0 Xeons shipped 7 January 2007, it wasn't until November 19, 2007 that AMD dropped Agena B2 stepping products out and those all had the wonderful TLB bug.

Arcades in the 1990's had multiscreen gaming already. AMD may have been the first to brand the tech and distribute it to consumers, but it long since had existed. Example being Sega's F355 Challenge from 1999 which again used 3 28" monitors for the sit-down cockpit version.

en.wikipedia.org/wiki/Multi-monitor

This isn't even worth a source... It is not correct and is based off of AMD marketing. Their iGPU was a trashcan fire, just less of a trashcan fire as Intel's.

Correct

I like how you adjusted this VS DX11. Technically the Fermi series of cards is DX12 (feature level 11.0) compliant. So it is still incorrect. The first fully compliant DX12 GPU was Nvidia with Maxwell.

www.extremetech.com/computing/190581-nvidias-ace-in-the-hole-against-amd-maxwell-is-the-first-gpu-with-full-directx-12-support

No. That would be Intel with the Pentium D. May of 2005, Intel released the Pentium D which took an MCM of two P4's and had them talk across the FSB.

en.wikipedia.org/wiki/Pentium_D

You should check yourself.

No, some supercomputers had 64 bit integer arithmetic and 64 bit registers but not all the CPU stages were 64-bit. That is a requirement.

In addition from the webpage you linked

"Intel i860[4] development began culminating in a (too late[5] for Windows NT) 1989 release; the i860 had 32-bit integer registers and 32-bit addressing, so it was not a fully 64-bit processor "

So your claim of Intel having a 64 bit processor in 1989 is not correct.

"Minus the ones IBM did, actual first multi core CPU. "

If you consider the Power4 a true dual core, which is very debatable given it shares L2 and L3 cache among all the cores. In addition, the L3 cache has to go through both the fabric and the NC units before it even gets to the processor. Technically speaking this doesn't meet either AMD's or Intel's current definition of "cores".

"Kentsfield based X32x0 Xeons shipped 7 January 2007, it wasn't until November 19, 2007 that AMD dropped Agena B2 stepping products out and those all had the wonderful TLB bug. "

phys.org/news/2006-12-amd-world-native-quad-core-x86.html

"Arcades in the 1990's had multiscreen gaming already. AMD may have been the first to brand the tech and distribute it to consumers, but it long since had existed. Example being Sega's F355 Challenge from 1999 which again used 3 28" monitors for the sit-down cockpit version. "

Off topic. I couldn't care less what they did in arcades. I guess I should have been more specific as you will nitpick. Of course on a PC related article on a PC enthusiast website I meant in relation to PCs. I do not go into boxing forums and say "actually no, I'm the first person to knock out Floyd Mayweather in a professional bout in his home arena, in a video game".

"This isn't even worth a source...It is not correct and is based off of AMD marketing. Their iGPU was a trashcan fire, just less of a trashcan fire as Intel's. "

Here, let me

techterms.com/definition/apu

"I like how you adjusted this VS DX11. Technically the Fermi series of cards is DX12 (feature level 11.0) compliant. So it is still incorrect. The first fully compliant DX12 GPU was Nvidia with Maxwell. "

Not even Pascal has full DX 12 support yet and even worse a portion of the features have to be emulated.

"No. That would be Intel with the Pentium D. May of 2005, Intel released the Pentium D which took an MCM of two P4's and had them talk across the FSB. "

If that's your interpretation of an MCM then technically the IBM Power4 and many other processors qualify as well. Go read the link you provided earlier, IBM connected up to four chips over their data fabric. Of course there are serious differences between AMD's implementation and Intel's / IBM's.

I don't know what kind of day you're having but nitpicking someone else's post as the superior fact man and failing at it isn't doing anyone any good. I'll admit I'm not correct all the time but I did not deserve a reply in the tone you provided.

And don't confuse people.

No idea how I'm going to give out 10 points but still, try it

And well, first GPU to 1GHz, first consumer x64 CPU, first CPU with 1GHz as well, HBM development etc...

Tbh, the only thing AMD is missing is bigger backing by third party software developers. AMD is innovating and making new stuff, Nvidia and Intel are just repurposing old stuff and fine-tuning it.

Just my 2 Pfennig

-TressFX announcement was in February 2013 and Hairworks was in October 2013

-FreeSync and G-Sync were both announced in the same week in March 2015

So in the first 2 cases you can't really claim that AMD copied NVIDIA, while in the latter ones I agree with you

I feel like quoting Jack Nicholson from Mars Attacks when he says, "Why can't we all just get along??"

I'm disappear now :) ....

AMD, trying to garner additional sales, is using a marketing ploy to say a "native quad core" is better than Intel's MCM setup, which absolutely zero performance tests showed. Intel released the first quad core; AMD released the first "native" quad core.

So the only real video games are the ones on a PC? Curious. I was actually kind of excited when AMD took Matrox's basic tech mainstream.

So are you talking about APUs or anything with an iGPU? There was no designation to that in your other post. APU is merely another term AMD used to market a product. If you notice, even the substantially better performing Iris based Intel products are not referred to as APUs, nor are the new Vega (Polaris) based Intel MCMs.

www.extremetech.com/computing/190581-nvidias-ace-in-the-hole-against-amd-maxwell-is-the-first-gpu-with-full-directx-12-support

According to Microsoft, asynchronous compute isn't a requirement to meet the DX12 full flag. If memory serves correctly, that was an after-the-fact add-on.

You are absolutely correct. What's cool is that CPU is also actually one of the first chips to get an L3 cache. Pretty neat little chips. Really shows how IBM paves the way for most of what we see in the market now.

AMD took tech that existed, rebranded it, then marketed it as their own. Very little as of late has been new. There was no tone attached to anything; there was misinformation on the page that needed to be shut down. I see you found a couple of nuances in what I posted. I enjoy getting corrected information; it grows me and the forums, having that spread around as opposed to the wrong stuff.

X399 is already "taken" by AMD so Intel can't do a X399.

I'm not really sure how pci-e lanes work (cpu vs chipset and the communication between both) but I know I wish there was room for an extra x4 NVMe, even if it's a pci-e slot drive

We can complain and take jabs at each other all we'd like over who's first at what and why for, based on a roadmap or really any release schedule made or names given to what - Thing is we're not the ones making the decisions about it.

If I had a slot on the board for deciding such things then maybe what I'd say would really matter but I don't so anything I'd have to say doesn't..... And it's the same for all here that I know of.

So... Why even argue about it?

It's pointless.