We are at the 2017 Game Developers Conference, where we were invited to one of the many enlightening tech sessions, titled "Is DirectX 12 Worth it," by Jurjen Katsman, CEO of Nixxes, a company credited with several successful PC ports of console games (Rise of the Tomb Raider, Deus Ex: Mankind Divided). Over the past 18 months, DirectX 12 has become the selling point to PC gamers for everything from Windows 10 (free upgrade) to new graphics cards, and even games. The lack of DirectX 12 support has even dented the PR of certain new AAA game launches, until the developers added support for the new API through patches. Game developers are now asking the dev community at large to manage its expectations of DirectX 12, the underlying point being that it isn't a silver bullet for all the tech limitations developers have to cope with, and that to reap all its performance rewards, developers have to put in a proportionate amount of effort.

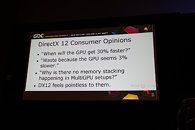

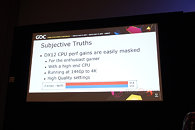

The presentation begins with the speaker talking about the disillusionment consumers feel about DirectX 12, and how they have yet to see the kind of console-rivaling performance gains DirectX 12 was purported to bring. Besides the lack of huge performance gains, consumers still await the multi-GPU utopia that was promised to them, in which you can not only mix and match GPUs of your choice across models and brands, but also have them stack up their video memory. This is theoretically possible with DirectX 12, but developers argue that it is easier said than done in the real world. One of the key ways in which DirectX 12 is designed to improve performance is by distributing rendering overhead evenly among the cores of a multi-core CPU. For high-performance desktop users with reasonably fast CPUs, however, the gains are negligible. The same goes for people gaming at higher resolutions, such as 1440p and 4K Ultra HD, where frame-rates are lower and performance tends to be more GPU-limited.