Tuesday, February 28th 2017

Is DirectX 12 Worth the Trouble?

We are at the 2017 Game Developers Conference, where we were invited to one of the many enlightening tech sessions, titled "Is DirectX 12 Worth it," by Jurjen Katsman, CEO of Nixxes, a company credited with several successful PC ports of console games (Rise of the Tomb Raider, Deus Ex: Mankind Divided). Over the past 18 months, DirectX 12 has become the selling point to PC gamers, of everything from Windows 10 (free upgrade) to new graphics cards, and even games, with the lack of DirectX 12 support even denting the PR of certain new AAA game launches, until the developers added support for the new API through patches. Game developers are now asking the community at large to manage its expectations of DirectX 12. The underlying point is that it isn't a silver bullet for all the tech limitations developers have to cope with, and that reaping all its performance rewards takes a proportionate amount of effort from developers.

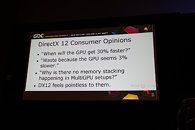

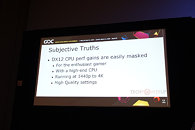

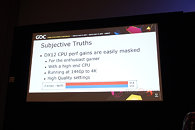

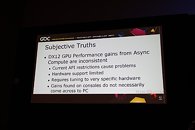

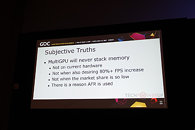

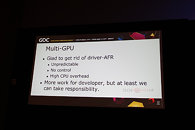

The presentation begins with the speaker talking about the disillusionment consumers feel about DirectX 12, and how they're yet to see the kind of console-rivaling performance gains DirectX 12 was purported to bring. Besides the lack of huge performance gains, consumers still await the multi-GPU utopia that was promised to them, in which you can not only mix and match GPUs of your choice across models and brands, but also have them stack up their video memory. That is a theoretical possibility with DirectX 12, but one which developers argue is easier said than done in the real world.

One of the key ways DirectX 12 is designed to improve performance is by distributing rendering overhead evenly among the cores of a multi-core CPU. For high-performance desktop users with reasonably fast CPUs, the gains are negligible. This also goes for people gaming at higher resolutions, such as 1440p and 4K Ultra HD, where frame-rates are lower and performance tends to be more GPU-limited.

The other big feature introduced to the mainstream with DirectX 12 is asynchronous compute. There have been examples of games that gain more performance from it on one brand of GPU than on the other, and this is a problem, according to developers. Hardware support for async compute is limited to only the latest GPU architectures, and requires further tuning specific to the hardware. Performance gains seen on closed ecosystems such as consoles, hence, don't necessarily carry over to the PC. The conclusion is that performance gains with async compute are inconsistent, and that developers should spend their time on making a better game rather than on catering to a very specific hardware user-base.

The speakers also cast doubt on the viability of memory stacking on multi-GPU, the idea that the memory of two GPUs simply adds up to become one large addressable block. To begin with, the multi-GPU user-base is still far too small for developers to spend more effort than simply writing an AFR (alternate frame rendering) path for their games. AFR is the most common performance-improving multi-GPU method, in which each GPU in a multi-GPU setup renders an alternate frame for the master GPU to output in sequence. It requires that each GPU holds a copy of the video memory mirrored with the other GPUs. Having to fetch data from the physical memory of a neighboring GPU is a performance-costly and time-consuming process.

The idea behind new-generation "close-to-the-metal" APIs such as DirectX 12 and Vulkan has been to make graphics drivers as irrelevant to the rendering pipeline as possible. The speakers contend that the drivers are still very relevant, and that with the advent of the new APIs their complexity has in fact gone up. This applies to memory management, manual multi-GPU (custom, non-AFR multi-GPU implementations), the underlying tech required to enable async compute, and the various performance-enhancement tricks the driver vendors implement to make their brand of hardware faster. Those tricks in turn can mean uncertainty for the developer in cases where the driver overrides certain techniques just to squeeze out a little bit of extra performance.
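To make the AFR and memory-stacking point concrete, here is a minimal Python sketch. It is a toy model and not DirectX 12 code: the `Gpu` class, the function names, and the 8192 MB figures are all hypothetical and chosen only for illustration. It shows frames alternating between GPUs, and why mirrored memory leaves the usable pool no larger than a single card, whereas the "stacked" ideal would be the sum.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    """Hypothetical stand-in for a physical GPU (illustration only)."""
    name: str
    vram_mb: int


def afr_schedule(num_frames, gpus):
    """Alternate frame rendering: frame i is rendered by GPU (i mod N)."""
    return [gpus[i % len(gpus)].name for i in range(num_frames)]


def usable_vram_afr(gpus):
    """With AFR every GPU mirrors the same resources, so the usable
    pool is bounded by the smallest card rather than by the total."""
    return min(g.vram_mb for g in gpus)


def stacked_vram_ideal(gpus):
    """The 'memory stacking' ideal: all memory forms one addressable block.
    This ignores the cost of fetching data from a neighboring GPU."""
    return sum(g.vram_mb for g in gpus)


gpus = [Gpu("GPU0", 8192), Gpu("GPU1", 8192)]
schedule = afr_schedule(4, gpus)
print(schedule)
print("AFR usable VRAM (MB):", usable_vram_afr(gpus))
print("Stacked ideal VRAM (MB):", stacked_vram_ideal(gpus))
```

The gap between the last two numbers is the promise consumers are waiting on. Closing it means splitting resources across GPUs, which brings back the costly cross-GPU fetches described above.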

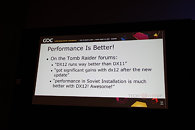

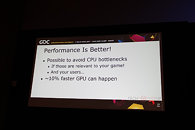

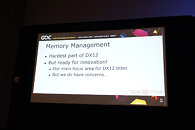

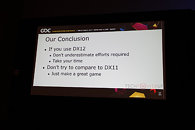

The developers revisit the question - is DirectX 12 worth it? Well, if you are willing to invest a lot of time into your DirectX 12 implementation, you could succeed, as in the case of "Rise of the Tomb Raider," in which users noticed "significant gains" with the new API (which were not trivial to achieve). They also argue that it's possible to overcome CPU bottlenecks with or without DirectX 12, if that's relevant to your game or user-base. They concede that async compute is the way forward, and that even if it doesn't bring console-rivaling performance gains, it can certainly benefit the PC. They're also happy to be rid of AFR multi-GPU as governed by the graphics driver, which was unpredictable and gave developers little control (remember those pesky 400 MB driver updates just to get multi-GPU support?). The new API-governed AFR means more work for the developer, but also far greater control, which the speaker believes will benefit users. Another point he made is that a successful port to DirectX 12 lays a good foundation for porting to Vulkan (mostly for mobile), which uses nearly identical concepts, technologies, and APIs to DX12.

Memory management is the hardest part of DirectX 12, but the developer community is ready to embrace the innovation (such as mega-textures, tiled resources, etc.). The speakers conclude by stating that DirectX 12 is hard; it can be worth the extra effort, but it may not be, either. Developers and consumers need to be realistic about what to expect from DirectX 12, and developers in particular need to focus on making good games, rather than spending their resources on becoming tech demonstrators instead of on actual content.
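The session's earlier point about CPU-limited versus GPU-limited scenarios can be illustrated with a toy frame-time model. This Python sketch rests on strong assumptions that are not from the talk: CPU and GPU work overlap fully, CPU submission work divides perfectly across worker threads, and all millisecond figures are invented for the example.

```python
def frame_time_ms(cpu_submit_ms, gpu_render_ms, worker_threads=1):
    """Toy model: the frame is gated by the slower of CPU and GPU.

    Assumption: CPU submission cost splits evenly across worker threads,
    which is the best case for multi-threaded command recording.
    """
    cpu_ms = cpu_submit_ms / worker_threads
    return max(cpu_ms, gpu_render_ms)


def fps(cpu_submit_ms, gpu_render_ms, worker_threads=1):
    """Frames per second for the toy model above."""
    return 1000.0 / frame_time_ms(cpu_submit_ms, gpu_render_ms, worker_threads)


# CPU-limited case (made-up numbers): spreading submission over 4 threads helps.
print(fps(20.0, 10.0, worker_threads=1))  # 50.0
print(fps(20.0, 10.0, worker_threads=4))  # 100.0

# GPU-limited case, e.g. a high resolution (made-up numbers): no gain at all.
print(fps(8.0, 25.0, worker_threads=1))   # 40.0
print(fps(8.0, 25.0, worker_threads=4))   # 40.0
```

In this model, extra CPU threads only matter while the CPU term is the larger one, which matches the observation that users with fast CPUs or high resolutions see negligible gains.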

72 Comments on Is DirectX 12 Worth the Trouble?

Unless a game is built from the ground up to use D3D 12, no significant performance gains can be seen compared with D3D 11.

Besides, so far, Vulkan seems a more mature and better API, and personally I am fully supporting it, if only for the fact that it is multi-platform, or can be ported to any platform, compared to DirectX (D3D) 12 which is Windows 10 only (pff)

To make DX12 hardware agnostic requires far greater effort than has been shown so far. You can code Async for AMD but you also need to code it to suit Nvidia.

Lots of work involved.

During GDC 2017 the CEO of Nixxes, Jurjen Katsman, talked about his experience in porting games from DirectX 11 to DirectX 12. Nixxes is the developer responsible for the DirectX 12 render paths of Deus Ex: Mankind Divided and Rise of the Tomb Raider.

No they f**king aren't. You game dev moppets do that with idiotic design decisions. Instead of leaving the 30% gain to the user in terms of higher framerate, expanding super playable game to larger audience, you add some bullshit eye candy that consumes 40% of the gain with maybe 1% added visual fidelity. I keep on ranting about tessellation for example where they use it backwards by cramming gazillion polygons in places where they don't matter, draining performance while not improving anything, just making it worse. Instead, they could make games the way they look now and REMOVE polygons where they don't matter (you know, how LOD works, just worse coz it has massive transitions between models), gaining performance and sacrificing visual fidelity where it doesn't matter anyway. And yet, they still don't get it after all these years and they keep on doing this nonsense with every new performance gaining tech. Stop wasting resources obtained from gains and wasting them where they don't matter at all!

The DX12 situation is the same. They gain 30% and then they are like wow, look at this gain, lets add this cool stuff you can only see with microscope, but it'll make a 50% performance penalty, but whatever. Excellent idea! F**k me.

My 980ti with fewer cores but faster clocks than a FuryX matches it in DX12 titles. In DX11, my card has the same performance but the FuryX drops the ball.

Nvidia architecture is already well suited to the task at hand, whereas AMD was held back by its architecture complexity in DX11.

Given the design and engineering experience of ATI/AMD and the hardware contained it's more relevant that AMD has not been performing as the hardware suggested, whereas Nvidia has. DX12 has allowed AMD to reach parity. It's not that Nvidia do worse.

And FWIW, if you read about DX12, the coding is highly particular to the hardware architecture. If you code with AMD in mind, Nvidia usually drops a fraction. If you code with Nvidia in mind, AMD sees little improvement.

There are dev guidelines from both camps on how to correctly code extensions and paths to suit each architecture.

And if you really want to say Nvidia can't do DX12, what are the fastest 2-3 single gfx cards right now?

But I don't identify myself with the 2nd sentence:

"Over the past 18 months, DirectX 12 has become the selling point to PC gamers, of everything from Windows 10 (free upgrade) to new graphics cards, and even games, with the lack of DirectX 12 support even denting the PR of certain new AAA game launches"

I have not bought games because of DX12

I have not upgraded to W10 for DX12

I have not upgraded my GPU for DX12

I have not rejected games because of their lack of DX12

Because... DX11 also was so vapour, "it was huge" ;-)

Not German...

www.ocaholic.ch/modules/smartsection/item.php?itemid=4052&page=9

Unfortunately not the best spread of titles but they at least have Ashes... Unfortunately Doom is OpenGL, but we'll give that to the Fury X over an overclocked 980ti.

We are stuck with Microsoft and Windows due to it.

When Direct3D works on Linux and on something other than x86, then it will become a great API.

- "We're tied too much to the vendor drivers... If only we had more liberty with memory... If only we had more direct access to the process..."

current times:

- "Don't get your hopes up, this is way too much work... I don't think many of us will bother..."

Really?... :rolleyes:

So what? It's been precisely the definition of PC Gaming for decades: "new tech = new hardware ---> upgrades".

Why should it be any different now? It's what makes PC a great platform: It moves forward.

What the hell is wrong with the industry nowadays...

Using DX12 and async, a Fury X performs better than a 1070 at 4K, and an R9 390X reaches 980Ti performance.

www.overclockers.ua/video/directx-12-amd-vs-nvidia/all/

There's nothing inherently wrong with the industry, but I can tell you once spoiled with high-level APIs (and productivity), many developers simply refuse to go back. (It's not a perfect analogy, but I'm the red headed step child in the office because I use the command line, whereas everybody else just presses buttons in the IDE.)

www.amd.com/pt-br/innovations/software-technologies/directx12

www.geforce.com/hardware/technology/dx12

And even Microsoft

msdn.microsoft.com/en-us/library/windows/desktop/dn899121(v=vs.85).aspx

What is Direct3D 12?

DirectX 12 introduces the next version of Direct3D,