Futuremark Showcases DirectX Raytracing Demo, Teases Upcoming 3D Benchmark Test

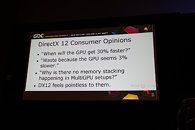

DirectX Raytracing (DXR) is a new feature in DirectX 12 that opens the door to a new class of real-time graphics techniques for games. We were thrilled to join Microsoft onstage for the announcement, which we followed with a presentation of our own work in developing practical real-time applications for this exciting new tech.

Accurate real-time reflections with DirectX Raytracing

Rendering accurate reflections in real time is difficult, and existing methods come with many challenges and limitations. For the past few months, we've been exploring ways of combining DirectX Raytracing with existing methods to solve some of these challenges. While much of our presentation went deep into the math behind our solution, I would like to show you some examples of our new technique in action.
