Thursday, March 19th 2015

AMD Announces FreeSync, Promises Fluid Displays More Affordable than G-SYNC

AMD today officially announced FreeSync, an open-standard technology that makes video and games with fluctuating frame-rates look more fluid on PC monitors. A logical next step to V-Sync, and analogous in function to NVIDIA's proprietary G-SYNC technology, FreeSync is a dynamic display refresh-rate technology that lets monitors sync their refresh-rate to the frame-rate the GPU is able to put out, resulting in a fluid display output.

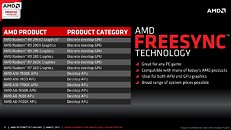

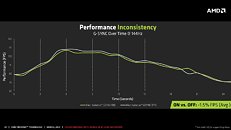

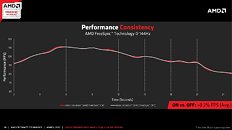

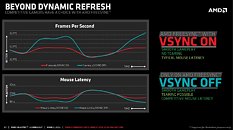

FreeSync is an evolution of V-Sync, a feature that syncs the GPU's frame-rate to the display's refresh-rate to prevent "frame tearing" when the frame-rate is higher than the refresh-rate, but which is known to cause input-lag and stutter when the GPU is not able to keep up with the refresh-rate. FreeSync works on both ends of the cable, keeping refresh-rate and frame-rate in sync, to fight both frame tearing and input-lag.

What makes FreeSync different from NVIDIA G-SYNC is that it is AMD's specialization of a VESA-standard feature (Adaptive-Sync), which is slated to be a part of the DisplayPort feature-set and is advanced by the DisplayPort 1.2a standard, currently featured only on AMD Radeon GPUs and Intel's upcoming "Broadwell" integrated graphics. Unlike G-SYNC, FreeSync does not require any proprietary hardware, and comes with no licensing fees. When monitor manufacturers support DP 1.2a, they don't have to pay a dime to AMD. There's no special hardware involved in supporting FreeSync, either, just support for the open-standard and royalty-free DP 1.2a.

AMD announced that no fewer than 12 monitors with FreeSync support from major display manufacturers are already announced or will be announced shortly. A typical 27-inch display with a TN-film panel, 40-144 Hz refresh-rate range, and WQHD (2560 x 1440 pixels) resolution, such as the Acer XG270HU, should cost US $499. There are also Ultra-Wide 2K (2560 x 1080 pixels) 34-inch and 29-inch monitors, such as the LG xUM67 series, starting at $599. These displays offer refresh-rates of up to 75 Hz. Samsung is leading the 4K Ultra HD pack for FreeSync, with the UE590 series 24-inch and 28-inch, and UE850 series 24-inch, 28-inch, and 32-inch Ultra HD (3840 x 2160 pixels) monitors, offering refresh-rates of up to 60 Hz. ViewSonic is offering a full-HD (1920 x 1080 pixels) 27-incher, the VX2701mh, with refresh-rates of up to 144 Hz.

On the GPU end, FreeSync is currently supported on the Radeon R9 290 series (R9 290, R9 290X, R9 295X2), R9 285, R7 260X, R7 260, and AMD "Kaveri" APUs. Intel's Core M processors should, in theory, support FreeSync, as their integrated graphics support DisplayPort 1.2a.

On the performance side of things, AMD claims that FreeSync has a smaller performance penalty than NVIDIA G-SYNC, and more consistent performance. The company put out a few of its own benchmarks to back that claim.

For AMD GPUs, the company will add support for FreeSync with the upcoming Catalyst 15.3 drivers.
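The difference between V-Sync and a dynamic refresh-rate can be illustrated with a small timing model. The Python sketch below is only an illustration under simplifying assumptions, not AMD's implementation: the function names are hypothetical, V-Sync is modeled as plain double-buffering on a fixed-refresh display, and a frame time outside the panel's supported range is simply clamped to that range (real drivers handle that case in their own ways).

```python
import math


def vsync_interval_ms(render_ms: float, refresh_hz: float) -> float:
    """On-screen time of a frame with double-buffered V-Sync (simplified model).

    A finished frame can only be shown on a refresh tick, so its display
    time is rounded up to a whole number of refresh periods.
    """
    period_ms = 1000.0 / refresh_hz
    # The small epsilon guards against float rounding when render_ms is an
    # exact multiple of the refresh period.
    periods = max(1, math.ceil(render_ms / period_ms - 1e-9))
    return periods * period_ms


def variable_refresh_interval_ms(render_ms: float, min_hz: float, max_hz: float) -> float:
    """On-screen time of a frame on a variable-refresh display (simplified model).

    Inside the panel's range the display refreshes when the frame is ready.
    Assumption: outside the range the interval is clamped to the range limits.
    """
    shortest_ms = 1000.0 / max_hz
    longest_ms = 1000.0 / min_hz
    return min(max(render_ms, shortest_ms), longest_ms)


if __name__ == "__main__":
    # Hypothetical GPU frame times in milliseconds.
    for render_ms in (10.0, 20.0, 24.0):
        fixed = vsync_interval_ms(render_ms, refresh_hz=60.0)
        dynamic = variable_refresh_interval_ms(render_ms, min_hz=40.0, max_hz=144.0)
        print(f"render {render_ms:5.1f} ms | V-Sync @ 60 Hz: {fixed:5.1f} ms | "
              f"40-144 Hz panel: {dynamic:5.1f} ms")
```

In this model, a frame that takes 20 ms to render (50 fps) is held for two full 60 Hz refresh periods under V-Sync, while a 40-144 Hz panel shows it for the 20 ms it actually took.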


96 Comments on AMD Announces FreeSync, Promises Fluid Displays More Affordable than G-SYNC

24-25 inch size

IPS/AHVA/PLS display panel

WQHD resolution

120-144 Hz refresh rate

1 ms response time

AdaptiveSync support

no PWM flickering

(quasi) bezelless design

Come on, LG Display/Samsung/AU Optronics, I know you can do it!

www.pcper.com/reviews/Graphics-Cards/Mobile-G-Sync-Confirmed-and-Tested-Leaked-Alpha-Driver

I don't see anything that you can actually disagree with.

I am not writing any opinion.

What I am saying is that nVidia is not telling you that G-sync is out there with a different name.

They have not invented the wheel, they are trying to re-invent it and charge you for it.

The part about GCN or GM1xx or GM2xx or some vliw architecture to support some-Sync technology goes like this:

If the eDP vX.X is supported by some GPU, then that GPU is able to support this some-Sync.

Now, the DisplayPort that you have on your monitor is very different from eDP. The standard makes the adaptive refresh rate optional, which means that no one bothered to implement it in their monitors and GPUs.

On the other hand, eDP is something that can save you a lot of power by forcing the makers to give you control over the refresh rate of your screen. It is not the same as DP but it is NOT optional. It has more features because it is on special devices.

For example, FEC (forward error correction) is optional in the IEEE 802.3-2008 standard, but no one bothers to support it except a few companies that sell IPs or h/w in research centers or data centers... not to customers like you and me.

I'll wait until 40" HDTVs have it, as I have no interest in going back to a lil monitor any time soon; by that time hopefully shit will have matured.

Last but not least, I am an NVIDIA user, but I hate when they make everything closed, like PhysX and G-SYNC.

I currently own a 750 Ti and hope it will soon get adaptive sync, as NVIDIA's new mobile GPUs did. :lovetpu:

It's the monitors that don't go that low.

Televisions, I think, all go down to 24 fps.

Yeah, thank you AMD for bringing us some affordable technologies :toast:

Good luck to G-Sync though... :roll:

Now I come to find out that you have to purchase a monitor where they've baked adaptive v-sync support into the DisplayPort 1.2a connection.

- Lastly, this advanced adaptive v-sync craze is really aimed at the mainstream gamer who knows very little about frame rendering times and frame latency. The problem is that frame latency, frame time and other variables of frame rendering mechanics are only now being discussed in GPU and performance reviews.

It's not common knowledge, and I question how they expect to sell people on the idea that FreeSync makes your game smoother, when most people didn't even notice their gaming was 'unsmooth' to begin with.

Additionally, FreeSync is far from automatic at the driver level. If a lot of people still struggle to know or find their Catalyst Control Panel... or what a monitor OSD is, where does that leave them when trying to fiddle about setting up FreeSync?

Once you do some research and learn a bit about how an image is rendered, you find out that for the most part, you can achieve the same effect as G-SYNC/FreeSync without needing to buy additional hardware.

Making use of tools such as Radeon Pro, RTSS and CRU (to create custom resolutions) is the key to helping you achieve a much smoother experience, all while using the same hardware you currently own.

Setting up a custom monitor resolution and/or capping your frame rate takes about the same amount of time as it does to enable FreeSync.

The former option is free; the latter is costly.
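As a rough illustration of the frame-rate capping mentioned above, a limiter can be sketched in a few lines of Python. This is only a sketch of the general idea, not how RTSS or Radeon Pro implement it; `run_capped` and `render_frame` are hypothetical names, and real limiters use more precise waiting than `time.sleep`.

```python
import time


def run_capped(render_frame, cap_fps: float, frames: int) -> None:
    """Call render_frame() `frames` times, no faster than cap_fps per second."""
    budget_s = 1.0 / cap_fps           # time allotted to each frame
    deadline = time.perf_counter()
    for _ in range(frames):
        render_frame()
        deadline += budget_s           # when this frame's time slot ends
        remaining = deadline - time.perf_counter()
        if remaining > 0:
            time.sleep(remaining)      # frame finished early: wait out the slot
        else:
            # Frame overran its slot: restart the schedule from now instead
            # of trying to catch up with a burst of fast frames.
            deadline = time.perf_counter()


if __name__ == "__main__":
    start = time.perf_counter()
    run_capped(lambda: None, cap_fps=60.0, frames=30)
    print(f"30 frames took {time.perf_counter() - start:.3f} s")
```

Capping slightly below what the GPU can sustain evens out frame pacing, which is the effect the tools above are being used for.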

EDIT: In addition to what I posted, the arguments over market share are laughable. This 'technology' is not game-changing. While I wouldn't call it a gimmick, it's not some new architecture that will take us into a grand age of computer graphics and performance.

It's a bonus feature if nothing else and not every monitor, GPU or APU is going to support it.

Latency from v-sync is such a fake issue when playing games online and a non-issue when you're playing single-player games. If people really cared so much that they had to turn off v-sync because of the latency, they would choose their monitors more carefully, looking at overall monitor latency, and take steps to reduce overall system latency, meaning turning off things in the BIOS etc.

The issue was keeping sync without the stutter of 15, 30, 60 fps locks. Adaptive V-Sync, debuted by NVIDIA, gave you sync when you could afford it and turned it off when it would probably cause stutters, a minor solution. G-SYNC, FreeSync and Adaptive-Sync (really confusing name choice, IMO) are solutions with greater scope.

Customer: Can I, please?

:D

I'm hoping you'll be able to get a 390 w/ a 1440p Freesync capable monitor for $1000.