Monday, March 23rd 2015

AMD Bets on DirectX 12 for Not Just GPUs, but Also its CPUs

In an industry presentation on why the company is excited about Microsoft's upcoming DirectX 12 API, AMD revealed its most important feature that could impact on not only its graphics business, but also potentially revive its CPU business among gamers. DirectX 12 will make its debut with Windows 10, Microsoft's next big operating system, which will be given away as a free upgrade for _all_ current Windows 8 and Windows 7 users. The OS will come with a usable Start menu, and could lure gamers who stood their ground on Windows 7.

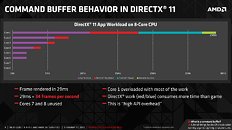

In its presentation, AMD touched upon two key features of the DirectX 12, starting with its most important, Multi-threaded command buffer recording; and Asynchronous compute scheduling/execution. A command buffer is a list of tasks for the CPU to execute, when drawing a 3D scene. There are some elements of 3D graphics that are still better suited for serial processing, and no single SIMD unit from any GPU architecture has managed to gain performance throughput parity with a modern CPU core. DirectX 11 and its predecessors are still largely single-threaded on the CPU, in the way it schedules command buffer.A graph from AMD on how a DirectX 11 app spreads CPU load across an 8-core CPU reveals how badly optimized the API is, for today's CPUs. The API and driver code is executed almost entirely on one core, and this is something that's bad for even dual- and quad-core CPUs (if you fundamentally disagree with AMD's "more cores" strategy). Overloading fewer cores with more API and driver-related serial workload makes up the "high API overhead" issue that AMD believes is holding back PC graphics efficiency compared to consoles, and it has a direct and significant impact on frame-rates.DirectX 12 heralds a truly multi-threaded command buffer pathway, which scales up with any number of CPU cores you throw at it. Driver and API workloads are split evenly between CPU cores, significantly reducing API overhead, resulting in huge frame-rate increases. How big that increase is in the real-world, remains to be seen. AMD's own Mantle API addresses this exact issue with DirectX 11, and offers a CPU-efficient way of rendering. Its performance-yields are significant on GPU-limited scenarios such as APUs, but on bigger setups (eg: high-end R9 290 series graphics, high resolutions), the performance gains though significant, are not mind-blowing. In some scenarios, Mantle offered the difference between "slideshow" and "playable." Cynics have to give DirectX 12 the benefit of the doubt. It could end up doing a better job than even Mantle, at pushing paper through multi-core CPUs.

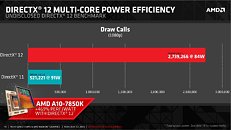

AMD's own presentation appears to agree with the way Mantle played out in the real world (benefits for APUs vs. high-end GPUs). A slide highlights how DirectX 12 and its new multi-core efficiency could step up draw-call capacity of an A10-7850K by over 450 percent. Sufficed to say, DirectX 12 will be a boon for smaller, cheaper mid-range GPUs, and make PC gaming more attractive for the gamer crowd at large. The fine-grain asynchronous compute-scheduling/execution, is another feature to look out for. It breaks down complex serial workloads into smaller, parallel tasks. It will also ensure that unused GPU resources are put to work on these smaller parallel tasks.So where does AMD fit in all of this? DirectX 12 support will no doubt help AMD sell GPUs. Like NVIDIA, AMD has preemptively announced DirectX 12 API support on all its GPUs based on the Graphics CoreNext architecture (Radeon HD 7000 series and above). AMD's real takeaway from DirectX 12 will be how its cheap 8-core socket AM3+ CPUs could gain tons of value overnight. The notion that "games don't use >4 CPU cores" will dramatically change. Any DirectX 12 game will split its command buffer and API loads between any number of CPU cores you throw at it. AMD sells you 8-core CPUs for as low as $170 (the FX-8320). Intel's design strategy of placing stronger but fewer cores on its client processors, could face its biggest challenge with DirectX 12.

In its presentation, AMD touched upon two key features of the DirectX 12, starting with its most important, Multi-threaded command buffer recording; and Asynchronous compute scheduling/execution. A command buffer is a list of tasks for the CPU to execute, when drawing a 3D scene. There are some elements of 3D graphics that are still better suited for serial processing, and no single SIMD unit from any GPU architecture has managed to gain performance throughput parity with a modern CPU core. DirectX 11 and its predecessors are still largely single-threaded on the CPU, in the way it schedules command buffer.A graph from AMD on how a DirectX 11 app spreads CPU load across an 8-core CPU reveals how badly optimized the API is, for today's CPUs. The API and driver code is executed almost entirely on one core, and this is something that's bad for even dual- and quad-core CPUs (if you fundamentally disagree with AMD's "more cores" strategy). Overloading fewer cores with more API and driver-related serial workload makes up the "high API overhead" issue that AMD believes is holding back PC graphics efficiency compared to consoles, and it has a direct and significant impact on frame-rates.DirectX 12 heralds a truly multi-threaded command buffer pathway, which scales up with any number of CPU cores you throw at it. Driver and API workloads are split evenly between CPU cores, significantly reducing API overhead, resulting in huge frame-rate increases. How big that increase is in the real-world, remains to be seen. AMD's own Mantle API addresses this exact issue with DirectX 11, and offers a CPU-efficient way of rendering. Its performance-yields are significant on GPU-limited scenarios such as APUs, but on bigger setups (eg: high-end R9 290 series graphics, high resolutions), the performance gains though significant, are not mind-blowing. In some scenarios, Mantle offered the difference between "slideshow" and "playable." Cynics have to give DirectX 12 the benefit of the doubt. It could end up doing a better job than even Mantle, at pushing paper through multi-core CPUs.

AMD's own presentation appears to agree with the way Mantle played out in the real world (benefits for APUs vs. high-end GPUs). A slide highlights how DirectX 12 and its new multi-core efficiency could step up draw-call capacity of an A10-7850K by over 450 percent. Sufficed to say, DirectX 12 will be a boon for smaller, cheaper mid-range GPUs, and make PC gaming more attractive for the gamer crowd at large. The fine-grain asynchronous compute-scheduling/execution, is another feature to look out for. It breaks down complex serial workloads into smaller, parallel tasks. It will also ensure that unused GPU resources are put to work on these smaller parallel tasks.So where does AMD fit in all of this? DirectX 12 support will no doubt help AMD sell GPUs. Like NVIDIA, AMD has preemptively announced DirectX 12 API support on all its GPUs based on the Graphics CoreNext architecture (Radeon HD 7000 series and above). AMD's real takeaway from DirectX 12 will be how its cheap 8-core socket AM3+ CPUs could gain tons of value overnight. The notion that "games don't use >4 CPU cores" will dramatically change. Any DirectX 12 game will split its command buffer and API loads between any number of CPU cores you throw at it. AMD sells you 8-core CPUs for as low as $170 (the FX-8320). Intel's design strategy of placing stronger but fewer cores on its client processors, could face its biggest challenge with DirectX 12.

87 Comments on AMD Bets on DirectX 12 for Not Just GPUs, but Also its CPUs

Hyperbole doesn't help.

Dx12 looks good but the games are not there yet despite mantel at least nudging some devs that way, adoption wont be rapid.

Mantle was meant to push Microsoft to move faster on DX12. Mantle and DX12 from the beginning was going to minimize the distance between Intel and AMD CPUs in games that where poorly written. GPU performance with Mantle was always a secondary bonus, as long as Nvidia was sticking with DX11. Now that Nvidia is benefiting from DX12, it is a question mark if AMD will gain more from GCN compared to the performance gains of Maxwell with DX12. Anandtech's DX12 benchmarks show that GCN 1.1 is not as good as Maxwell in 900 series under DX12.

I feel like fooled by microsoft and intel with their DX11 and below, so WTH is going on from past time really happened?

So there is or already fishy thing between microft and intel before mantle came out? and micro push out the DX12 to avoid DX ashamed by mantle?

Can't wait what can be do FX8350 or FX9590 with DX12.

Is it gonna make any significant improvements in terms of FPS to FC4 and Crysis 3 and the like?

The power consumption is still an issue, right? Or, are you saying that the cheapest APUs out there are good-enough to beat an economical Intel system with a nice-enough discrete GPU ?

www.techpowerup.com/img/15-03-23/100j.jpg

I do find that slide kinda funny and bit misrepresented. 470% performance per watt increase sounds good in the slide but doesn't translate in to 470% higher fps. Its a bit of a misleading slide for people that are not as tech savvy.

I remember years ago running Half-Life 1 on a lowly Compaq D510 (Pentium 4 2.0GHz, 845G chipset) with a low end GeForce 6200 AGP plugged into it. Using DirectX (DX7?) the game managed about 35-40fps and looked very jerky and hitchy indeed. Using OpenGL it managed about 60-80fps and looked pretty fluid. I'm not joking, it was as marked as that.

Now, DX11 is much more efficient, but it still has room for significant improvement to reduce overhead as the likes of Mantle show.

Now, some people may think, well, I've go a really powerful system so efficiency isn't very important, but that's where they're wrong. With gaming you can never have too much power as something will always come along to drag down the framerate and keeping that framerate up is the whole reason to spend big bucks on high end gaming rigs.

If you subscribe to the AMD HTT camp, then this explains perfectly why a "quad" APU paces with a dual i3.

More interested in seeing the eventual FX vs i5/i7 benches. If they show similar results that the dual module APUs deliver then it'll be interesting.