Thursday, November 21st 2019

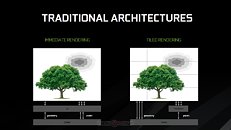

NVIDIA Develops Tile-based Multi-GPU Rendering Technique Called CFR

NVIDIA is investing in multi-GPU development, specifically SLI over NVLink, and has developed a new multi-GPU rendering technique that appears to be inspired by tile-based rendering. Implemented at the single-GPU level, tile-based rendering has been one of the techniques behind NVIDIA's performance gains since its "Maxwell" family of GPUs. 3DCenter.org discovered that NVIDIA is working on a multi-GPU counterpart called CFR, which could be short for "checkerboard frame rendering" or "checkered frame rendering." The method is already quietly present in current NVIDIA drivers, although it is not documented for developers to implement.

In CFR, the frame is divided into tiny square tiles, like a checkerboard. Odd-numbered tiles are rendered by one GPU, and even-numbered ones by the other. Unlike AFR (alternate frame rendering), in which each GPU's dedicated memory holds a copy of all the resources needed to render the frame, methods like CFR and SFR (split frame rendering) optimize resource allocation. CFR also purportedly produces less micro-stutter than AFR. 3DCenter also detailed the features and requirements of CFR. To begin with, the method is only compatible with DirectX (including DirectX 12, 11, and 10), and not with OpenGL or Vulkan. For now it is exclusive to "Turing," since NVLink is required (its bandwidth is probably needed to virtualize the tile buffer). Tools like NVIDIA Profile Inspector let you force CFR on, provided the other hardware and API requirements are met. It still has many compatibility problems, and remains practically undocumented by NVIDIA.
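The checkerboard split described above can be illustrated with a minimal Python sketch. This is not NVIDIA's implementation, which is undocumented: the function names, the tile size, and the `(column + row) % 2` ownership rule are all assumptions chosen to reproduce an alternating two-GPU pattern.

```python
# Illustrative sketch only. The driver's real tile size and assignment
# rule are not documented; everything here is a hypothetical model.

def tile_owner(tile_x, tile_y, num_gpus=2):
    """Return the GPU index that renders the tile at (tile_x, tile_y).

    Alternating on (tile_x + tile_y) gives a checkerboard: neighbouring
    tiles, horizontally and vertically, belong to different GPUs.
    """
    return (tile_x + tile_y) % num_gpus


def assign_tiles(width, height, tile_size):
    """Build a row-major grid of GPU indices covering a width x height frame."""
    cols = -(-width // tile_size)   # ceiling division
    rows = -(-height // tile_size)
    return [[tile_owner(tx, ty) for tx in range(cols)] for ty in range(rows)]


def pixel_share(width, height, tile_size):
    """Count how many pixels each of the two GPUs renders."""
    counts = [0, 0]
    for y in range(0, height, tile_size):
        for x in range(0, width, tile_size):
            # Tiles on the right and bottom edges may be clipped by the frame.
            w = min(tile_size, width - x)
            h = min(tile_size, height - y)
            counts[tile_owner(x // tile_size, y // tile_size)] += w * h
    return counts


if __name__ == "__main__":
    for row in assign_tiles(64, 32, 16):
        print(row)
    print(pixel_share(1920, 1080, 32))
```

Because the tiles are small and interleaved, each GPU ends up with a near-equal share of the frame regardless of where the expensive geometry sits. That is the intuitive advantage over SFR, which splits the frame into a few large regions.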

Source: 3DCenter.org

33 Comments on NVIDIA Develops Tile-based Multi-GPU Rendering Technique Called CFR

www.forum-3dcenter.org/vbulletin/showpost.php?p=12144578&postcount=3586

Not a fun reality, I realize, but mGPU in DX12 was not an answer, more a copout and passing of the issue to devs.