Monday, February 18th 2019

AMD Radeon VII Retested With Latest Drivers

Just two weeks ago, AMD released their Radeon VII flagship graphics card. It is based on the new Vega 20 GPU, which is the world's first graphics processor built using a 7 nanometer production process. Priced at $699, the new card offers performance levels 20% higher than Radeon RX Vega 64, which should bring it much closer to NVIDIA's GeForce RTX 2080. In our testing we still saw a 14% performance deficit compared to RTX 2080. For the launch-day reviews AMD provided media outlets with a press driver dated January 22, 2019, which we used for our review.

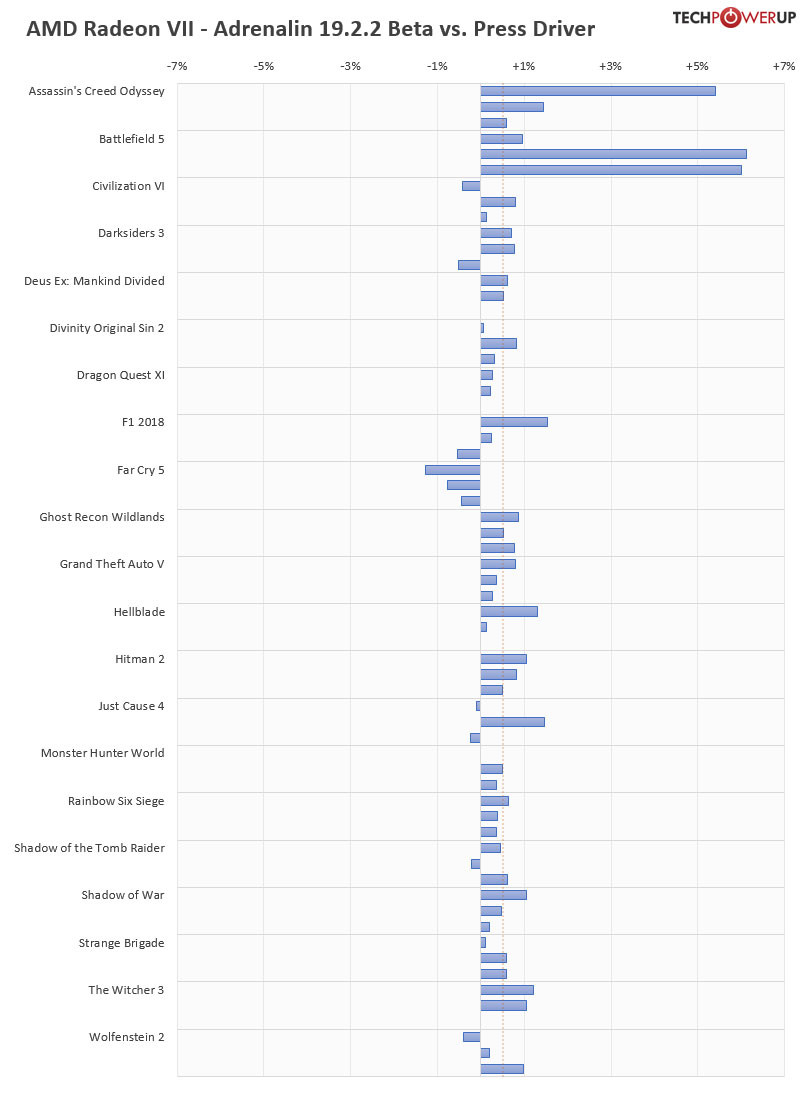

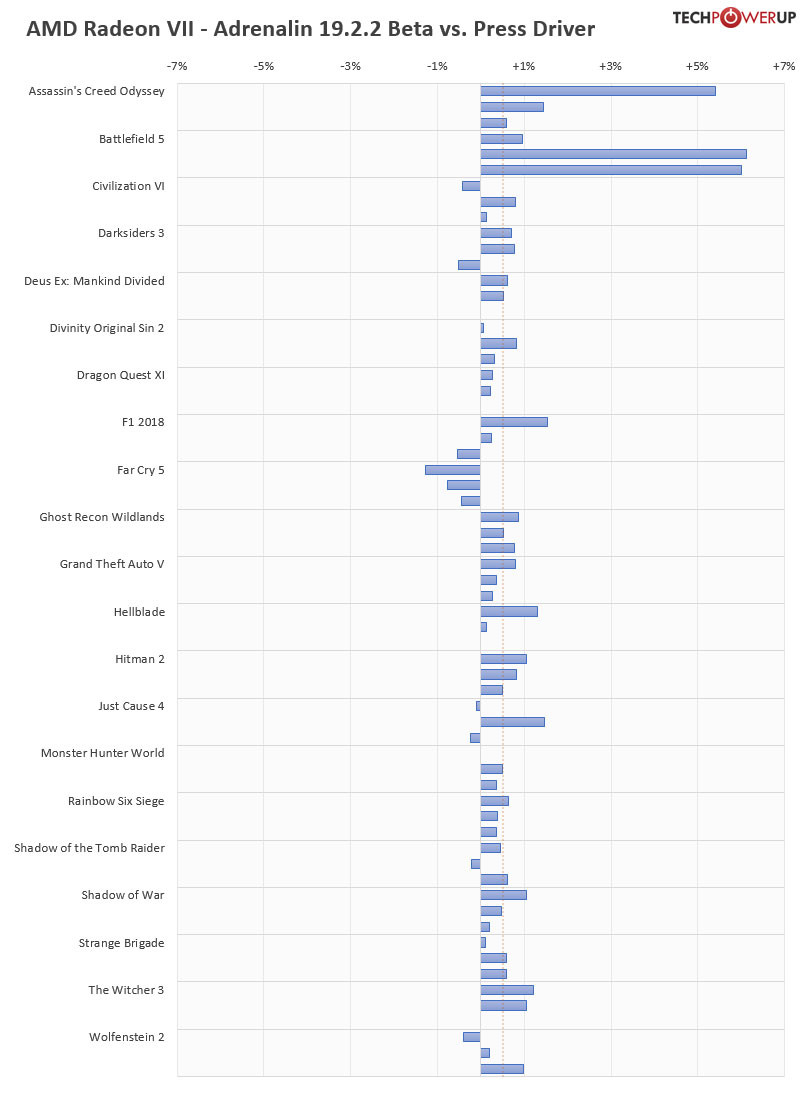

Since the first reviews went up, people in online communities have been speculating that these were early drivers and that new drivers will significantly boost the performance of Radeon VII, making up lost ground against RTX 2080. There's also the mythical "fine wine" phenomenon, where performance of Radeon GPUs improves significantly over time, incrementally. We've put these theories to the test by retesting Radeon VII with AMD's latest Adrenalin 2019 19.2.2 drivers, using our full suite of graphics card benchmarks. In the chart below, we show the performance deltas compared to our original review. For each title, three resolutions are tested: 1920x1080, 2560x1440, 3840x2160 (in that order).

Please do note that these results include performance gained by the washer mod and thermal paste change that we had to do when reassembling the card. These changes reduced hotspot temperatures by around 10°C, allowing the card to boost a little bit higher. To verify what performance improvements were due to the new driver, and what was due to the thermal changes, we first retested the card using the original press driver (with washer mod and TIM). The result was a +0.2% performance improvement.

Using the latest 19.2.2 drivers added +0.45% on top of that, for a total improvement of +0.653%. Taking a closer look at the results, we can see that two specific titles have seen significant gains due to the new driver version. Assassin's Creed Odyssey and Battlefield V both achieve several-percent improvements; it looks like AMD has worked some magic in those games to unlock extra performance. The remaining titles see small but statistically significant gains, suggesting that there are some "global" tweaks that AMD can implement to improve performance across the board. Unsurprisingly, these gains are smaller than title-specific optimizations.
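For readers who want to check how the thermal and driver gains stack, here is a minimal sketch that compounds successive percentage gains. The function name `combine_gains` is made up for illustration, and the inputs are the rounded figures quoted above, so the result lands at roughly +0.65% rather than reproducing +0.653% to the last digit (the published total was presumably computed from unrounded per-game data).

```python
from math import prod


def combine_gains(gains_percent):
    """Compound successive percentage gains into one overall percentage.

    Each gain is applied on top of the previous result, so the factors
    are multiplied rather than the percentages simply added.
    """
    factor = prod(1.0 + g / 100.0 for g in gains_percent)
    return (factor - 1.0) * 100.0


# Rounded figures from the text (assumption: the unrounded values differ slightly).
thermal_gain = 0.2   # washer mod + new thermal paste, original press driver
driver_gain = 0.45   # 19.2.2 driver, measured on top of the thermal changes

total = combine_gains([thermal_gain, driver_gain])
print(f"combined gain: +{total:.3f}%")
```

At gains this small, compounding and plain addition are nearly identical; the difference only becomes visible once individual gains reach several percent.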

Looking further ahead, it seems plausible that AMD can increase performance of Radeon VII down the road, even though we have doubts that enough optimizations can be discovered to match RTX 2080. That might change if a lot of developers suddenly jump on the DirectX 12 bandwagon, which seems unlikely. It's also a question of resources: AMD can't waste time and money to micro-optimize every single title out there. Rather, the company seems to be doing the right thing: investing in optimizations for big, popular titles like Battlefield V and Assassin's Creed. Given how many new titles are coming out using Unreal Engine 4, and how much AMD is lagging behind in those titles, I'd focus on optimizations for UE4 next.

182 Comments on AMD Radeon VII Retested With Latest Drivers

I tested various driver versions on my GPU and benchmarked it. pfftt..

- Assassin's Creed, Battlefield, Civilization, Far Cry, GTA, Just Cause, Tomb Raider, Witcher and Wolfenstein need to be in the list as the latest iteration of a long-running game series along with its engine. Same applies to Hitman and possibly F1.

- Strange Brigade as a game is a one-off, but its engine is a newer iteration of the one behind Sniper Elite 4, which is one of the best DX12 implementations to date.

- Divinity: Original Sin 2, Monster Hunter and Shadow of War are a bit of one-offs as relevant and popular games running unique engines.

- Hellblade is a game that is artistically important and actually has a good implementation of Unreal Engine 4.

- R6: Siege is a unique case, as despite its release year it is a current and competitive game that is fairly heavy on GPUs.

- Deus Ex: Mankind Divided is a bit of a concession to AMD and DX12. This is a modified version of the same Glacier 2 engine that is behind the Hitman games.

- I am not too sure about the choice or relevance of Darksiders 3, Dragon Quest XI and Wildlands. Latest big UE4 releases and one of UbiSoft's non Assassin's Creed openworld games?

It is not productive to color games based on whether they use Nvidia GameWorks. The main problem with GameWorks, as far as Nvidia vs AMD is concerned, was that it was closed source, making it impossible for AMD to optimize for it if needed. GameWorks has been open source since 2016 or so. AMD does not have a branded program in the same way; GPUOpen and the tools and effects provided in it are non-branded but are present in a lot of games.

Wolfenstein II is idTech6. Fixed. Thanks.

*there's no performance degradation for 780ti, there's very slight improvement.

*780ti vs 290x on 2016 drivers - 780ti wins in 18 runs out of 28, 290x in 10 out of 28.

www.amd.com/en/gaming/featured-games

www.nvidia.com/en-us/geforce/games/latest-pc-games/#gameslist

I doubt W1zzard had that in mind or checked it when choosing games but there are 6 games benchmarked from both vendors' featured list and the games were not what I really expected:

AMD: Assassin's Creed Odyssey, Civilization VI, Deus Ex: Mankind Divided, Far Cry 5, Grand Theft Auto V, Strange Brigade.

Nvidia: Battlefield V, Darksiders 3, Divinity Original Sin II, Dragon Quest XI, Monster Hunter World, Shadow of the Tomb Raider.

:)

1060 and 980 were in the same level of performance back in 2016 and they are in 2019.

Nothing has changed (except for games that need more than 4GB of VRAM).

I don't think someone who spends 700$ on a card even cares about its performance after 3~ years or so, high-end owner needs high-end performance and upgrades sooner than mid-range user in general.

They had a 7970 with 3GB VRAM to compete with GTX 670/680 2GB.

And later they had a 290X with 4GB VRAM to compete with GTX 780/780ti 3GB.

At the same time, the mainstream res started slowly moving to 1440p as Korean IPS panels were cheap overseas and many enthusiasts imported one. This heavily increased VRAM demands, alongside the consoles being released with 6GB to address, which meant mainstream would quickly move to higher VRAM demands. It happened across just 1.5 generations of GPUs: even in the Nvidia camp the VRAM almost doubled across the whole stack, and then doubled again with Pascal. That is why people are liable to think AMD cards 'aged well' and Nvidia cards lost performance over time. This culminated in the release of the 3.5GB 'fast' VRAM GTX 970. That little bit of history ALSO underlines why AMD now releases a 16GB HBM card. They are banking on the idea that people THINK it might double again over time, that is why you can find some people advocating the 16GB as a good thing for gaming. And of course the expenses of having to alter the card.

If any supporter of Red needed confirmation bias, there it is :toast:. But it doesn't make it any less of an illusion that Nvidia drivers handicap performance over time.

It's not just VRAM, yeah VRAM requirements have risen significantly since then but don't forget you can easily remove the VRAM bottleneck by lowering the Texture quality.

970 was slower than the 780Ti at launch but it's not the case today, it's ahead actually, even in cases where the VRAM isn't a limiting factor.

I have better advice: buy a console (or two).

Radeon VII has a lot of VRAM; the only weakness in its memory subsystem is its quite low ROP count. In normal tasks that is more than enough, but using MSAA can really tank the performance. Luckily for AMD, MSAA is a dying breed; fewer and fewer games support that AA method anymore.

I'd focus on optimizations for UE4 next.

Nvidia is working closely with ue4 right now. I don't think AMD would risk working on optimizations on ue4 then have all the labor gone by a patch that would let AMD cards fall behind again. Just like Nvidia did with the dx11 HAWX game.

www.computerbase.de/2018-07/hdr-benchmarks-amd-radeon-nvidia-geforce/2/#diagramm-destiny-2-3840-2160

when exactly ?