Wednesday, September 2nd 2020

NVIDIA Announces GeForce Ampere RTX 3000 Series Graphics Cards: Over 10000 CUDA Cores

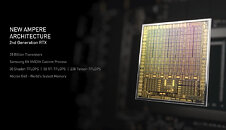

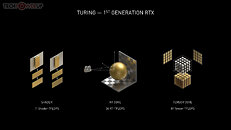

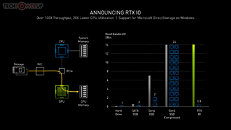

NVIDIA just announced its new generation GeForce "Ampere" graphics card series. The company is taking a top-down approach with this generation, much like "Turing," by launching its two top-end products, the GeForce RTX 3090 24 GB and the GeForce RTX 3080 10 GB graphics cards. Both cards are based on the 8 nm "GA102" silicon. Join us as we live blog the pre-recorded stream by NVIDIA, hosted by CEO Jen-Hsun Huang.

Update 16:04 UTC: Fortnite gets RTX support. NVIDIA demoed an upcoming update to Fortnite that adds DLSS 2.0, ambient occlusion, and ray-traced shadows and reflections. Coming soon.

Update 16:06 UTC: NVIDIA Reflex technology works to reduce e-sports game latency. Without elaborating, NVIDIA spoke of a feature that reduces input and display latencies "by up to 50%". The first supported games, arriving in September, will be Valorant, Apex Legends, Call of Duty: Warzone, Destiny 2 and Fortnite.

Update 16:07 UTC: Announcing NVIDIA G-SYNC eSports Displays, a 360 Hz IPS dual-driver panel that launches through various monitor partners this fall. The display has a built-in NVIDIA Reflex precision latency analyzer.

Update 16:07 UTC: NVIDIA Broadcast is a brand-new app, available in September, that offers a turnkey solution to enhance video and audio streaming by taking advantage of the AI capabilities of GeForce RTX. It makes it easy to filter and improve your video, add AI-based backgrounds (static or animated), and builds on RTX Voice to filter out background noise from audio.

Update 16:10 UTC: Ansel evolves into Omniverse Machinima, an asset exchange that helps independent content creators use game assets to create movies. Think fan-fiction Star Trek episodes using Star Trek Online assets. Beta in October.

Update 16:15 UTC: Updates to the AI tensor cores and RT cores. In addition to larger numbers of RT and tensor cores, the 2nd generation RT cores and 3rd generation tensor cores offer higher IPC. Making ray tracing have as little performance impact as possible appears to be an engineering goal with Ampere.

Update 16:18 UTC: Ampere 2nd Gen RTX technology. Traditional shader throughput is up 2.7x, the ray-tracing units are 1.7x faster, and the tensor cores bring a 2.7x speedup.

Update 16:19 UTC: Here it is! Samsung 8 nm and Micron GDDR6X memory. The announcement of Samsung and 8 nm came out of nowhere, as we were widely expecting TSMC 7 nm. Apparently NVIDIA will use Samsung for its Ampere client-graphics silicon, and TSMC for its lower-volume A100 professional-level compute processors.

Update 16:20 UTC: Ampere has almost twice the performance per watt of Turing!

Update 16:21 UTC: The Marbles 2nd Gen demo is jaw-dropping! NVIDIA demonstrated it at 1440p 30 Hz, or 4x the workload of first-gen Marbles (720p 30 Hz).

Update 16:23 UTC: Cyberpunk 2077 is playing big on the next generation. NVIDIA is banking extensively on the game to highlight the advantages of Ampere. The 200 GB game could absorb gamers for weeks or months on end.

Update 16:24 UTC: New RTX IO technology accelerates the storage sub-system for gaming. It works in tandem with the new Microsoft DirectStorage technology, the Windows API version of the Xbox Velocity Architecture, which is able to pull resources directly from disk into the GPU. It requires game engines to support the technology. The tech promises a 100x throughput increase and significant reductions in CPU utilization. It's timely, as PCIe Gen 4 SSDs are on the anvil.

Update 16:26 UTC: Here it is, the GeForce RTX 3080: 10 GB GDDR6X running at 19 Gbps, 238 tensor TFLOPs, 58 RT TFLOPs, 18 power phases.

Update 16:29 UTC: Airflow design. 90 W more cooling performance than the Turing FE cooler.

Update 16:30 UTC: Performance leap, $700. 2x as fast as the RTX 2080, available September 17. Up to 2x faster than the original RTX 2070.

Update 17:05 UTC: GDDR6X was purpose-developed by NVIDIA and Micron Technology, which could be an exclusive vendor of these chips to NVIDIA. These chips use the new PAM4 encoding scheme to significantly increase data rates over GDDR6. On the RTX 3090, the chips tick at 19.5 Gbps (data rate), with memory bandwidth approaching 940 GB/s.

Update 16:31 UTC: RTX 3070, $500, faster than the RTX 2080 Ti, 60% faster than the RTX 2070, available in October. 20 shader TFLOPs, 40 RT TFLOPs, 163 tensor TFLOPs, 8 GB GDDR6.

Update 16:33 UTC: Call of Duty: Black Ops Cold War is RTX-on.

Update 16:35 UTC: The RTX 3090 is the new TITAN. Twice as fast as the RTX 2080 Ti, 24 GB GDDR6X. The Giant Ampere. A BFGPU, $1,500, available from September 24. It is designed to power 60 fps at 8K resolution and is up to 50% faster than the TITAN RTX.
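The GDDR6X bandwidth figure follows from simple arithmetic: per-pin data rate times bus width, divided by 8 bits per byte. Below is a minimal sketch, assuming a 384-bit memory bus for the RTX 3090 and a 320-bit bus for the RTX 3080 (taken from NVIDIA's published specifications; neither width is stated in this post), and treating the result as an idealized peak with no protocol overhead. PAM4 signals four voltage levels, so each symbol carries two bits and the per-pin symbol rate is half the data rate.

```python
# Idealized peak memory bandwidth. The bus widths are assumptions taken from
# NVIDIA's published spec sheets, not from the live blog itself.

def pam4_symbol_rate_gbaud(data_rate_gbps: float, bits_per_symbol: int = 2) -> float:
    """Symbol rate per pin: PAM4 has 4 levels, i.e. log2(4) = 2 bits per symbol."""
    return data_rate_gbps / bits_per_symbol


def memory_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Per-pin data rate (Gbit/s) x number of data pins, / 8 bits per byte -> GB/s."""
    return data_rate_gbps * bus_width_bits / 8


if __name__ == "__main__":
    # (card, per-pin data rate in Gbps, assumed bus width in bits)
    cards = [("RTX 3090", 19.5, 384), ("RTX 3080", 19.0, 320)]
    for name, rate, width in cards:
        print(f"{name}: {memory_bandwidth_gb_s(rate, width):.0f} GB/s, "
              f"{pam4_symbol_rate_gbaud(rate):.2f} GBaud per pin")
```

For the RTX 3090 this gives 936 GB/s, consistent with the "approaching 940 GB/s" figure quoted for GDDR6X.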

Update 16:43 UTC: Wow, I want one. On paper, the RTX 3090 is the kind of card I'd upgrade my monitor for. Not sure a GPU has ever had that impact.

Update 16:59 UTC: Insane CUDA core counts, a 2-3x increase generation-over-generation. You won't believe these.

Update 17:01 UTC: GeForce RTX 3090 in detail. Over ten thousand CUDA cores!

Update 17:02 UTC: GeForce RTX 3080 details. More insane specs.

Update 17:03 UTC: The GeForce RTX 3070 has more CUDA cores than a TITAN RTX. And it's $500. Really wish these cards had come out in March; 2020 would've been a lot better.

Here's a list of the top 10 Ampere features.
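Peak FP32 throughput scales directly with the CUDA core count: each core can retire one fused multiply-add (two floating-point operations) per clock. Below is a minimal sketch, assuming the core counts and boost clocks from NVIDIA's published specifications (10496 cores at 1695 MHz, 8704 at 1710 MHz, and 5888 at 1725 MHz; none of these exact figures appears in this post). These are theoretical peaks; real game performance also depends on memory, scheduling, and instruction mix.

```python
# Theoretical peak FP32 TFLOPS = CUDA cores x 2 FLOPs (one FMA) x boost clock.
# Core counts and boost clocks below are assumptions from NVIDIA's spec sheets.

def peak_fp32_tflops(cuda_cores: int, boost_clock_mhz: float) -> float:
    """Peak FP32 TFLOPS, assuming every core issues one FMA per clock."""
    flops_per_clock = cuda_cores * 2  # an FMA counts as 2 FLOPs
    return flops_per_clock * boost_clock_mhz * 1e6 / 1e12


if __name__ == "__main__":
    specs = {
        "RTX 3090": (10496, 1695),
        "RTX 3080": (8704, 1710),
        "RTX 3070": (5888, 1725),
    }
    for name, (cores, clock) in specs.items():
        print(f"{name}: {peak_fp32_tflops(cores, clock):.1f} TFLOPS")
```

This prints roughly 35.6, 29.8, and 20.3 TFLOPS; the RTX 3070 result lines up with the "20 shader TFLOPs" NVIDIA quoted.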

Update 19:22 UTC: For a limited time, gamers who purchase a new GeForce RTX 30 Series GPU or system will receive a PC digital download of Watch Dogs: Legion and a one-year subscription to the NVIDIA GeForce NOW cloud gaming service.

Update 19:47 UTC: All Ampere cards support HDMI 2.1. The increased bandwidth provided by HDMI 2.1 allows, for the first time, a single cable connection to 8K HDR TVs for ultra-high-resolution gaming. Also supported is AV1 video decode.

Update 20:06 UTC: Added the complete NVIDIA presentation slide deck at the end of this post.

Update Sep 2nd: We received the following info from NVIDIA regarding international pricing:

- UK: RTX 3070: GBP 469, RTX 3080: GBP 649, RTX 3090: GBP 1399

- Europe: RTX 3070: EUR 499, RTX 3080: EUR 699, RTX 3090: EUR 1499 (this might vary a bit depending on local VAT)

- Australia: RTX 3070: AUD 809, RTX 3080: AUD 1139, RTX 3090: AUD 2429

502 Comments on NVIDIA Announces GeForce Ampere RTX 3000 Series Graphics Cards: Over 10000 CUDA Cores

I never said the CPU does the GPU's computation line by line. That is something you just invented. You just can't stop lying about what I said; you really don't give a f*** it seems.

The CPU can give instructions to the GPU, and the GPU will actually compute them. The CPU doesn't need to do the computation, but it does need to orchestrate what the GPU does. That is what the program someone wrote in C is doing. The program itself doesn't do anything either; it's just the instructions for the CPU, and the CPU passes those instructions on to the GPU to compute whatever the program wants computed.

If you knew something about C, you would know it gets compiled down to assembly language and then to machine code for x86 CPUs. Assembly usually targets just one instruction set, so it can't run on anything you like.

You are only projecting your own lack of knowledge onto others. You don't seem to be that bright either; literally everything you have said so far was wrong, and others have corrected you too. It's actually hard for me to remember encountering someone as willfully ignorant, or as purposefully deceptive, as you on here before.

Stop, you are the laughing stock of everyone reading this.

YOU said it runs line by line on the GPU. Then I replied that's garbage / totally wrong. I said it runs on both every time, and you don't seem to get that simple principle. You have posted total nonsense over and over again.

The CPU is still always the host processor; it orchestrates what the GPU has to do. The program doesn't run purely on the GPU, but also on the CPU. You have no sense of the abstraction happening with that C program. I'm still accurate on that, despite your silly attempts to frame it otherwise. You make extremely illogical points, so that won't happen either way. But somehow you are still trying.

IT'S NOT A C PROGRAM. It's a GLSL shader, written in a language with C-style syntax. I wrote that as clearly as possible; of course you don't have any idea what that means. That's why you don't understand any of this either. This is what I am trying to show you.

Let's sum up:

You were wrong on the TFLOPS argument.

You were wrong on the general-purpose argument.

You are still wrong on the C program argument, which, by the way, you started, and which has nothing at all to do with this whole discussion.

Or what did this have to do with anything now? You wrote this: Of course they run on specialized hardware. It's called a Shading Unit inside a GPU. That's definitely as specialized as it gets, my friend. And there is no such thing as a "shading language" if it's written in C. C is an all-purpose language, not a shading one.... Whatever that is even supposed to mean.

Now stop this completely silly discussion. But you just don't seem to know when to stop, do you?

You can't figure that out even now? Read back that comment. I was correct about all of them.

More TFLOPS indicate more performance.

GPUs are general-purpose; that's why they are used for all sorts of things besides graphics. That's also why they can be used for GPGPU (general-purpose computing on graphics processing units). It's in the name.

Thoroughly explained why; can you do the same?

en.wikipedia.org/wiki/OpenGL_Shading_Language

Just how long will you make me do this? Have I not proven you wrong enough times? I feel like a bully.

You're gonna use that cognitive dissonance thing and tell me that you never said that, right ?

You haven't answered the question of how that language (C or not, it doesn't matter) runs purely on the GPU. Everything has to go through the CPU at some point; the GPU and CPU work together constantly, in every process involved in getting a game onto the screen. That's why you still need a CPU to play games. We wouldn't even need a CPU if you could just program on the GPU. That's definitely not how it works.

And either way, what is this point even trying to accomplish? What are you trying to reach with this exact point, besides all the other points that you were wrong on? You still haven't responded to this, so I'll quote you again: Are you actually saying Shading Units aren't specialized hardware when it's literally in the name??? :kookoo::kookoo::kookoo:

en.wikipedia.org/wiki/OpenGL_Shading_Language

That you know nothing, you are a compulsive liar, and really, really stubborn. It wasn't my initial goal, but alas.

en.wikipedia.org/wiki/Unified_shader_model

Again, totally projecting. What was your initial goal, other than showing how much you know about shaders but not the overall picture of how a computer works? I don't need to know how everything works, because I know how abstraction in computer architecture works; that's the point of having it. So, you still haven't told me your initial goal. It seems you just wanted to talk s*** at other members of the forum and nothing else. You are truthfully a sad and silly human being. I kind of pity you that you had to go to such lengths to defend a useless point that doesn't even further the discussion. You seem to just be venting because something went wrong in your life?

Now, you still haven't answered why you even wrote that comment about languages not running on specialized hardware (which they actually can, with the help of the CPU), since it had literally nothing to do with anything being discussed. It's not relevant to the specialized-hardware point that you were wrong about. You can still have specialized hardware, like Shader Units or TMUs or ROPs... It's also not relevant to the TFLOPS argument that you were also wrong about. So what was it even relevant for, you silly goose? Tell me; I have asked at least 3 times already and you can't answer, but I can keep asking. :laugh: :laugh: :laugh:

Sure I am. I just have a CS degree for nothing. But you are the expert... You probably tried programming a game once, it didn't even work out, and now you like to play yourself up as someone of importance here. It's not working for you, sorry to be the one who tells you the truth.

I'm not the one who needs to cry; the people around you are. And they probably also need to run from the kind of pathetic liar and wannabe you are. I won't even say troll, because you somehow manage to be way beneath that.

Wonder what AMD will do now; they have to launch a big GPU. We need the power to run games at 4K.

Oh, so from what I've read, each core can do INT+FP or FP+FP, vs. INT+FP only in the previous generation? It's still two cores inside, but only one when doing specific operations?

www.nvidia.com/en-us/geforce/news/geforce-gtx-1660-ti-advanced-shaders-streaming-multiprocessor/

Basically, the 2080 Ti has 13.5 TFLOPs of FP32 and 13.5 TFLOPs of INT32. If a game fully leverages both FP32 and INT32 instructions, the 2080 Ti would effectively have 27 TFLOPs of combined FP+INT. So, depending on how many INT32 instructions are used, the 2080 Ti's effective TFLOPs range from 13.5 to 27. In the Shadow of the Tomb Raider (SoTR) example, 38 out of 100 instructions are INT, which means the 2080 Ti effectively has 13.5 + 13.5 × (38/62) = 21.77 TFLOPs.

Meanwhile, the 20 TFLOPs of the 3070 are fixed (FP32 or FP32+INT32) and do not depend on the game engine's usage of INT instructions.
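The effective-TFLOPs arithmetic in these two posts can be written as a small throughput model. A rough sketch under idealized assumptions (perfect scheduling, no memory or dependency stalls): on Turing, the FP32 and INT32 pipes each handle only their own instruction type, so whichever pipe is busier sets the pace; on Ampere, one datapath is FP32-only and the other runs either FP32 or INT32, so combined throughput stays at the full rate until INT32 work exceeds what the shared datapath can absorb. Splitting the 3070's 20 TFLOPs evenly into 10 + 10 is an assumption for illustration, and the 38% INT mix is the SoTR figure quoted above.

```python
# Idealized combined FP32+INT32 instruction throughput (tera-ops per second).
# Assumes perfect scheduling and no stalls; the rates are illustrative.

def turing_effective_tops(fp32_rate: float, int32_rate: float, int_fraction: float) -> float:
    """Separate FP32 and INT32 pipes: the busier pipe limits throughput."""
    if not 0.0 <= int_fraction <= 1.0:
        raise ValueError("int_fraction must be between 0 and 1")
    limits = []
    if int_fraction < 1.0:
        limits.append(fp32_rate / (1.0 - int_fraction))  # FP32 pipe bound
    if int_fraction > 0.0:
        limits.append(int32_rate / int_fraction)  # INT32 pipe bound
    return min(limits)


def ampere_effective_tops(fp32_only_rate: float, shared_rate: float, int_fraction: float) -> float:
    """One FP32-only datapath plus one datapath that runs FP32 or INT32."""
    if not 0.0 <= int_fraction <= 1.0:
        raise ValueError("int_fraction must be between 0 and 1")
    limits = [fp32_only_rate + shared_rate]  # both datapaths fully busy
    if int_fraction > 0.0:
        limits.append(shared_rate / int_fraction)  # INT32 fits only on the shared path
    return min(limits)


if __name__ == "__main__":
    mix = 38 / 100  # 38 of every 100 instructions are INT32
    print(f"RTX 2080 Ti: {turing_effective_tops(13.5, 13.5, mix):.2f}")  # 21.77
    print(f"RTX 3070:    {ampere_effective_tops(10.0, 10.0, mix):.2f}")  # 20.00
```

At an even 50/50 mix the Turing model reaches its 27 TFLOPs ceiling, while the Ampere model only drops below its full rate once INT32 instructions exceed half the mix.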

@Vya Domus and @PowerPC: You guys forgot that the 2080 Ti can do concurrent FP32+INT32, so effectively the 2080 Ti has 27 TFLOPS in rare instances.

If I ever build a desktop again, I would never mess with or cheap out on the power supply. I would buy everything else used, but the power supply I would buy new. So I wouldn't push it.

A 3080 is definitely the card I was waiting for!