Saturday, September 19th 2020

NVIDIA Readies RTX 3060 8GB and RTX 3080 20GB Models

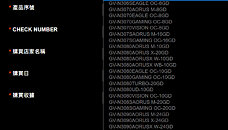

A GIGABYTE webpage meant for redeeming the RTX 30-series Watch Dogs Legion + GeForce NOW bundle lists the graphics cards eligible for the offer, including a large selection based on unannounced RTX 30-series GPUs. Among these are references to a "GeForce RTX 3060" with 8 GB of memory and, more interestingly, a 20 GB variant of the RTX 3080. The list also confirms the RTX 3070S with 16 GB of memory.

The RTX 3080 launched last week comes with 10 GB of memory across a 320-bit memory interface, using 8 Gbit memory chips, while the RTX 3090 achieves its 24 GB memory amount by piggy-backing two of these chips per 32-bit channel (chips on either side of the PCB). It's conceivable that the RTX 3080 20 GB will adopt the same method. There exists a vast price gap between the RTX 3080 10 GB and the RTX 3090, which NVIDIA could look to fill with the 20 GB variant of the RTX 3080. The question of whether you should wait for the 20 GB variant of the RTX 3080 or pick up the 10 GB variant right now will depend on the performance gap between the RTX 3080 and RTX 3090. We'll answer this question next week.
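The capacity arithmetic above can be checked with a short sketch. It assumes one chip per 32-bit channel by default and two when chips are piggy-backed on both sides of the PCB; the function name `vram_gb` and its parameters are illustrative only, and the 384-bit bus width used for the RTX 3090 is its published specification rather than a figure from this article.

```python
def vram_gb(bus_width_bits: int, chip_gbit: int = 8, chips_per_channel: int = 1) -> float:
    """Total memory in GB for a given bus width and chip layout."""
    channels = bus_width_bits // 32          # one channel per 32 bits of bus width
    total_gbit = channels * chips_per_channel * chip_gbit
    return total_gbit / 8                    # 8 Gbit = 1 GB


print(vram_gb(320))                        # RTX 3080: 10.0
print(vram_gb(384, chips_per_channel=2))   # RTX 3090: 24.0
print(vram_gb(320, chips_per_channel=2))   # rumored RTX 3080 20 GB: 20.0
```

Doubling the chips per channel on the existing 320-bit bus gives 20 GB without changing the memory interface, which is why that configuration is the conceivable one.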

Source:

VideoCardz

157 Comments on NVIDIA Readies RTX 3060 8GB and RTX 3080 20GB Models

3080 10GB is going to be a mighty fine, super future proof 1440p product. 4K? Nope. Unless you like it faked with blurry shit all over the place in due time. It's unfortunate though that 1440p and 4K don't play nicely together with res scaling on your monitor.

Which is why DirectStorage exists, as its sole purpose is to make games quit this stupid behavior through allowing for faster streaming, incentivizing streaming in what is actually needed just before it's needed rather than accounting for every possible eventuality well before it might possibly happen as they do now. Given how important consoles are for game development, DS adoption will be widespread in the near future - well within the useful lifetime of the 3080. And VRAM allocations will as such shrink with its usage. Is it possible developers will use this now-free VRAM for higher quality textures and the like? Sure, that might happen, but it will take a lot of time. And it will of course increase the stress on the GPU's ability to process textures rather than its ability to keep them available, moving the bottleneck elsewhere. Also, seeing how Skyrim has already been remastered, if developers patched in DS support to help modders it really wouldn't be that big of a surprise.

Besides, using the most notoriously heavily modded game in the world to exemplify realistic VRAM usage scenarios? Yeah, that's representative. Sure. And of course, mod developers are focused on and skilled at keeping VRAM usage reasonable, right? Or maybe, just maybe, some of them are only focused on making their mod work, whatever the cost? I mean, pretty much any game with a notable amount of graphical mods can be modded to be unplayable on any GPU in existence. If that's your benchmark, no GPU will ever be sufficient for anything and you might as well give up. If your example of a representative use case is an extreme edge case, then it stands to reason that your components also need configurations that are - wait for it - not representative of the overall gaming scene. If your aim is to play Skyrim with enough mods that it eats VRAM for breakfast, then yes, you need more VRAM than pretty much anyone else. Go figure.

And again: you are - outright! - mixing up the two questions from my post, positing a negative answer to a) as also necessitating a negative answer to b). Please stop doing that. They are not the same question, and they aren't even directly related. All GPUs require you to lower settings to keep using them over time. Exactly which settings and how much depends on the exact configuration of the GPU in question. This does not mean that the GPU becomes unusable due to a singular bottleneck, as has been the case with a few GPUs over time. If 10GB of VRAM forces you to ever so slightly lower the settings on your heavily modded Skyrim build - well, then do so. And keep playing. It doesn't mean that you can't play, nor does it mean that you can't mod. Besides, if (going by your screenshot) it runs at 60fps on ... a 2080Ti? 1080Ti?, then congrats, you can now play at 90-120fps on the same settings? Isn't that pretty decent?

If 10GB or 11GB isn't enough for a certain title, just turn the friggin textures to ultra. I can't wait to see people cry over 'can it run crysis?' mode because waaaaah, max settings waaaah.

As for "creative" ... sure, they could make arbitrarily higher VRAM amount versions by putting double density chips on some pad but not others. Would that help anything? Likely not whatsoever.

Any time games need to resort to swapping and they cannot do that within the space of a single frame update, you will suffer stutter or frametime variance. I've gamed too much to ignore this and I will never get subpar VRAM GPUs again. The 1080 was perfectly balanced that way, always has an odd GB to spare no matter what you throw at it. This 3080, most certainly is not balanced the same way. That is all, and everyone can do with that experience-based info whatever they want ;) I'll happily lose 5 FPS average for a stutter free experience.

Node has everything to do with VRAM setups because it also determines power/performance metrics and those relate directly to the amount of VRAM possible and what power it draws. In addition, the node directly weighs in on the yield/cost/risk/margin balance, as do VRAM chips. Everything is related.

Resorting to alternative technologies like DirectStorage and whatever Nvidia is cooking up itself is all well and good, but that reeks a lot like DirectX12's mGPU to me. We will see it in big budget games when devs have the financials to support it. We won't see it in the not as big games and... well... those actually happen to be the better games these days - hiding behind the mainstream cesspool of instant gratification console/MTX crap. Not as beautifully optimized, but ready to push a high fidelity experience in your face. The likes of Kingdom Come Deliverance, etc.

In the same vein, I don't want to get forced to rely on DLSS for playable FPS. It's all proprietary and on a per-game basis and when it works, cool, but when it doesn't, I still want to have a fully capable GPU that will destroy everything.

Exactly, I always get the impression I'm discussing practical situations with theorycrafters when it comes to this. There are countless examples of situations that go way beyond the canned review benchmarks and scenes.

Allocated VRAM will be used at some point. All of you seem to think that applications just allocate buffers randomly for no reason at all to fill up the VRAM and then when it gets close to the maximum amount of memory available it all just works out magically such that the memory in use is always less than the one being allocated.

A buffer isn't allocated now and used an hour later, if an application is allocating a certain quantity of memory then it's going to be used pretty soon. And if the amount that gets allocated comes close to the maximum amount of VRAM available then it's totally realistic to expect problems.

News flash, Ultra details are not meant for current gen hardware at all, they are there so that people think that their current hardware sucks.

There are always ways to kill the performance of any GPU, regardless of what its capabilities are. Take games from 20 years ago, hell, path-trace them and you kill every single GPU out there LOL.

Everything is a compromise, you just gotta be aware of it and voila, problem gone. 700usd for 3080 10GB is already freaking sweet, 900usd for 3080 20GB? Heck no, even if 4K 120hz becomes the norm in 2 years (which it won't). That extra money will make better sense when spent on other components of the PC.

I'm glad we arrived at the point where we agree 10GB 3080's are a compromise to begin with.

Having an extra 2GB would not make a 770 4GB able to deliver 60fps at 1080p Ultra in games where the 2GB can't though, so it's a moot point.

Having extra VRAM only makes sense when you already have the best possible configuration, for example 780 Ti with 6GB VRAM instead of 3GB would make sense (yeah I know the original Titan card).

If you can spend a little more, make it count at the present time, not sometime into the future.

BTW I upgraded to the R9 290 from my old GTX 680, the R9 290 totally destroyed 770 4GB even then though.

At the same time, a 3090 with 12GB is actually better balanced than the 3080 with 10. If anything it'd make total sense to follow your own advice and double that 3080 to 20GB as it realistically really is the top config. I might be wrong here, but I sense a healthy dose of cognitive dissonance in your specific case? You even provided your own present-day example of software (FS2020) that already surpasses 10GB... you've made your own argument.

All I am saying is doubling down on VRAM with a second tier or third tier GPU doesn't make any sense, when you can spend a little more and get the next tier of GPU. 3070 16GB at 600usd, why not just buy the 3080 10GB? Buying 3080 20GB? Yeah, just use that extra USD for better RAM where it makes a difference 99% of the time.

I'm fine with 3090 24GB though, which is the highest performance GPU out there. Its only weakness is the price :D.

Remember that Turing is also endowed with a much bigger cache.

That makes 8GB VRAM of Ampere behave very different from 8GB of Turing, just saying.

Note also that stutter is back with Turing - we've had several updates and games showing us that. Not unusual for a new gen, but still. They need to put work into preventing it. Latency modes are another writing on the wall. We never needed them... ;)

I'm counting all those little tweaks they do and the list is getting pretty damn long. That's a lot of stuff needing to align for good performance.

If you are bothered with perf/usd then extra VRAM should be your no go zone.

If you aren't bothered with perf/usd then knock yourself out with the 3090 :D. I assume the people buying them already have the best CPU/RAM combo, otherwise it's a waste...

Edit: I'm playing on a 2070 Super MaxQ laptop (stuck in mandatory quarantine) with the newest driver, HAGS ON and games are buttery smooth. Which game are you talking about, perhaps I can check.

As time passes and events happen (player movement, etc.), unused data is ejected from memory to allow for pre-caching of the new possibilities afforded by the new situation. If not, then pretty much the entire game would need to live in VRAM. Which of course repeats for as long as the game is running. Some data might be kept and not cleared out as it might still be relevant for a possible future use, but most of it is ejected. The more possible scenarios that are pre-loaded, the less of this data is actually used for anything. Which means that the slower the expected read rate of the storage medium used, the lower the degree of utilization becomes as slower read rates necessitate earlier pre-caching, expanding the range of possible events that need to be accounted for.

Some of this data will of course be needed later, but at that point it will long since have been ejected from memory and pre-loaded once again. Going by Microsoft's data (which ought to be very accurate, they do after all make DirectX, so they should have the means to accurately monitor this), DirectStorage improves the degree of utilization of data in VRAM by 2.5x. Assuming they achieved a 100% utilization rate (which they obviously didn't, as that would require their caching/streaming algorithms to be effectively prescient), that means at the very best their data showed a 40% rate of utilization before DirectStorage - i.e. current DirectX games are at most making use of 40% of the data they are pre-loading before clearing it out. If MS achieved a more realistic rate of utilization - say 80% - that means the games they started from utilized just 32% of pre-loaded data before clearing it out. There will always be some overhead, so going by this data alone it's entirely safe to say that current games cache a lot more data than they use.
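The arithmetic behind those percentages is just the post-DirectStorage utilization divided by the claimed 2.5x improvement. A minimal sketch follows; the function name is made up for illustration, and the 100% and 80% figures are the hypothetical cases from the paragraph above, not measured data.

```python
def utilization_before(utilization_after: float, improvement: float = 2.5) -> float:
    """Utilization of pre-loaded data before an `improvement`-fold gain."""
    return utilization_after / improvement


# Hypothetical post-DirectStorage utilization rates from the post above
for after in (1.0, 0.8):
    print(f"{after:.0%} after -> {utilization_before(after):.0%} before")
```

Whatever the real post-DirectStorage figure is, it cannot exceed 100%, so 40% is the upper bound on what current games use of their pre-loaded data under this claim.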

And no, this obviously isn't happening on timescales anywhere near an hour - we're talking millisecond time spans here. Pre-caching is done for perhaps the next few seconds, with data ejection rolling continuously as the game state changes - likely frame-by-frame. That's why moving to SSD storage as the default for games is an important step in improving this - the slow seek times and data rates of HDDs necessitate multi-second pre-caching, while adopting even a SATA SSD as the baseline would dramatically reduce the need for pre-caching.

And that is the point here: DS and SSD storage as a baseline will allow for less pre-caching, shortening the future time span for which the game needs possibly necessary data in VRAM, thus significantly reducing VRAM usage. You obviously can't tell which of the pre-loaded data is unnecessary until some other data has been used (if you could, there would be no need to pre-load it!). The needed data might thus just as well live in that ~1GB exceeding a theoretical 8GB VRAM pool for that Skyrim screenshot as in the 8GB that are actually there. But this is exactly why faster transfer rates help alleviate this, as you would then need less time to stream in the necessary data. If a player is in an in-game room moving towards a door four seconds away, with a three-second pre-caching window, data for what is beyond the door will need to start streaming in in one second. If faster storage and DirectStorage (though the latter isn't strictly necessary for this) allows the developers to expect the same amount of data to be streamed in in, say, 1/6th of the time - which is reasonable even for a SATA SSD given HDD seek times and transfer speeds - that would mean data streaming doesn't start until 2.5s later. For that time span, VRAM usage is thus reduced by as much as whatever amount of data was needed for the scene beyond the door. And ejection can also be done more aggressively for the same reason, as once the player has gone through the door the time needed to re-load that area is similarly reduced. Thus, the faster data can be loaded, the less VRAM is needed at the same level of graphical quality.

That is only true if the base amount of VRAM becomes an insurmountable obstacle which cannot be circumvented by lowering settings. Which is why this is applicable to something like a 2GB GPU, but won't be in the next decade for a 10GB one. The RX 580 is an excellent example, as the scenarios in which the 4GB cards are limited are nearly all scenarios in which the 8GB one also fails to deliver sufficient performance, necessitating lower settings no matter what. This is of course exacerbated by reviewers always testing at Ultra settings, which typically increase VRAM usage noticeably without necessarily producing a matching increase in visual quality. If the 4GB one produces 20 stuttery/spiky frames per second due to a VRAM limitation but the 8GB one produces 40, the best thing to do (in any game where frame rate is really important) would be to lower settings on both - in which case they are likely to perform near identically, as VRAM use drops as you lower IQ settings.

Do the stutters kick in immediately once you exceed the size of the framebuffer? Or are you comparing something like an 8GB GPU to an 11GB GPU at settings allocating 9-10GB for those results? If the latter, then that might just as well be indicative of a poor pre-caching system (which is definitely not unlikely in an old and heavily modded game).

Yes, everything is related, but you presented that as a causal relation, which it largely isn't, as there are multiple steps in between the two which can change the outcome of the relation.

From what's been presented, DS is not going to be a complicated API to implement - after all, it's just a system for accelerating streaming and decompression of data compressed with certain algorithms. It will always take time for new tech to trickle down to developers with less resources, but the possible gains from this makes it a far more likely candidate for adoption than something like DX12 mGPU - after all, reducing VRAM utilization can directly lead to less performance tuning of the game, lowering the workload on developers.

This tech sounds like a classic example of "work smart, not hard", where the classic approach has been a wildly inefficient brute-force scheme but this tech finally seeks to actually load data into VRAM in a smart way that minimizes overhead.

I entirely agree on that, but it's hardly comparable. DLSS is proprietary and only works on hardware from one vendor on one platform, and needs significant effort for implementation. DS is cross-platform and vendor-agnostic (and is likely similar enough in how it works to the PS5's system that learning both won't be too much work). Of course a system not supporting it will perform worse and need to fall back to "dumb" pre-caching, but that's where the baseline established by consoles will serve to raise the baseline featureset over the next few years.
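To put numbers on the door example from earlier in this post, here is a rough sketch of the timing. It assumes streaming has to begin exactly one pre-caching window before the player reaches the door; the function and variable names are hypothetical and the 4 s, 3 s and 1/6th figures are the ones used in the example.

```python
def stream_start_s(time_to_event_s: float, precache_window_s: float) -> float:
    """Seconds from now until streaming for the upcoming area must begin."""
    return max(0.0, time_to_event_s - precache_window_s)


time_to_door = 4.0           # player is four seconds from the door
hdd_window = 3.0             # pre-caching window assumed for slow storage
fast_window = hdd_window / 6 # same data streamed in 1/6th of the time

hdd_start = stream_start_s(time_to_door, hdd_window)
fast_start = stream_start_s(time_to_door, fast_window)

print(hdd_start)               # 1.0
print(fast_start)              # 3.5
print(fast_start - hdd_start)  # 2.5 seconds during which the data need not sit in VRAM
```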

With RTX 3080's 760 GB/s bandwidth, if you target 144 FPS, that leaves 5.28 GB of bandwidth per frame if you utilize it 100% perfectly. Considering most games use multiple render passes, they will read the same resources multiple times and will read temporary data back again, I seriously doubt a game will use more than ~2 GB of unique texture and mesh data in a frame, so why would RTX 3080 need 20 GB of VRAM then?
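The per-frame figure is simply peak bandwidth divided by the target frame rate. A quick sketch, assuming perfect utilization as the post does (the helper name is illustrative only):

```python
def gb_per_frame(bandwidth_gb_s: float, fps: float) -> float:
    """Upper bound on data that can cross the memory bus during one frame."""
    return bandwidth_gb_s / fps


print(f"{gb_per_frame(760, 144):.2f}")  # 5.28
```

Since each render pass re-reads resources, the amount of unique data touched per frame will be well below this ceiling, which is the point being argued.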

As you were saying, computational and bandwidth requirements grow with VRAM usage, often they grow even faster too.

Any time data needs to be swapped from system memory etc., there will be a penalty, there's no doubt about that. It's a latency issue, no amount of bandwidth for PCIe or SSDs will solve this. So you're right so far.

Games have basically two ways of managing resources:

- No active management - everything is allocated during loading (still fairly common). The driver may swap if needed.

- Resource streaming

This is where I have a problem with your reasoning, where is the evidence of this GPU being unbalanced?

3080 is two generations newer than 1080, it has 2 GB more VRAM, more advanced compression, more cache and a more advanced design which may utilize the VRAM more efficiently. Where is your technical argument for this being less balanced?

I'll say the truth is in benchmarking, not in anecdotes about how much VRAM "feels right". :rolleyes:

All data has to be there, it doesn't matter that you are rendering only a portion of a scene, all assets that need to be in that scene must be already in memory. They are never loaded based on the possibility of something happening or whatever.

No thank you with your 640kb VRAM is all you'll ever need...

In this case, 12GB would've been great for the 3080, but potentially the bandwidth would've been the same as the 3090, which would not have created enough segmentation.

Thus they had to choose 10GB, which can already be limiting in some present games (e.g. Battlefield 5 4K max with RT on), with the option of launching a different tier later on, if they feel the card is not competitive enough.

Nvidia doesn't panic, but they plan ahead and take competition very seriously. That's why they win so often, even sometimes when they don't have the best performance or the best price-performance ratio. They rarely leave themselves open and that's how any well-organized company should be.